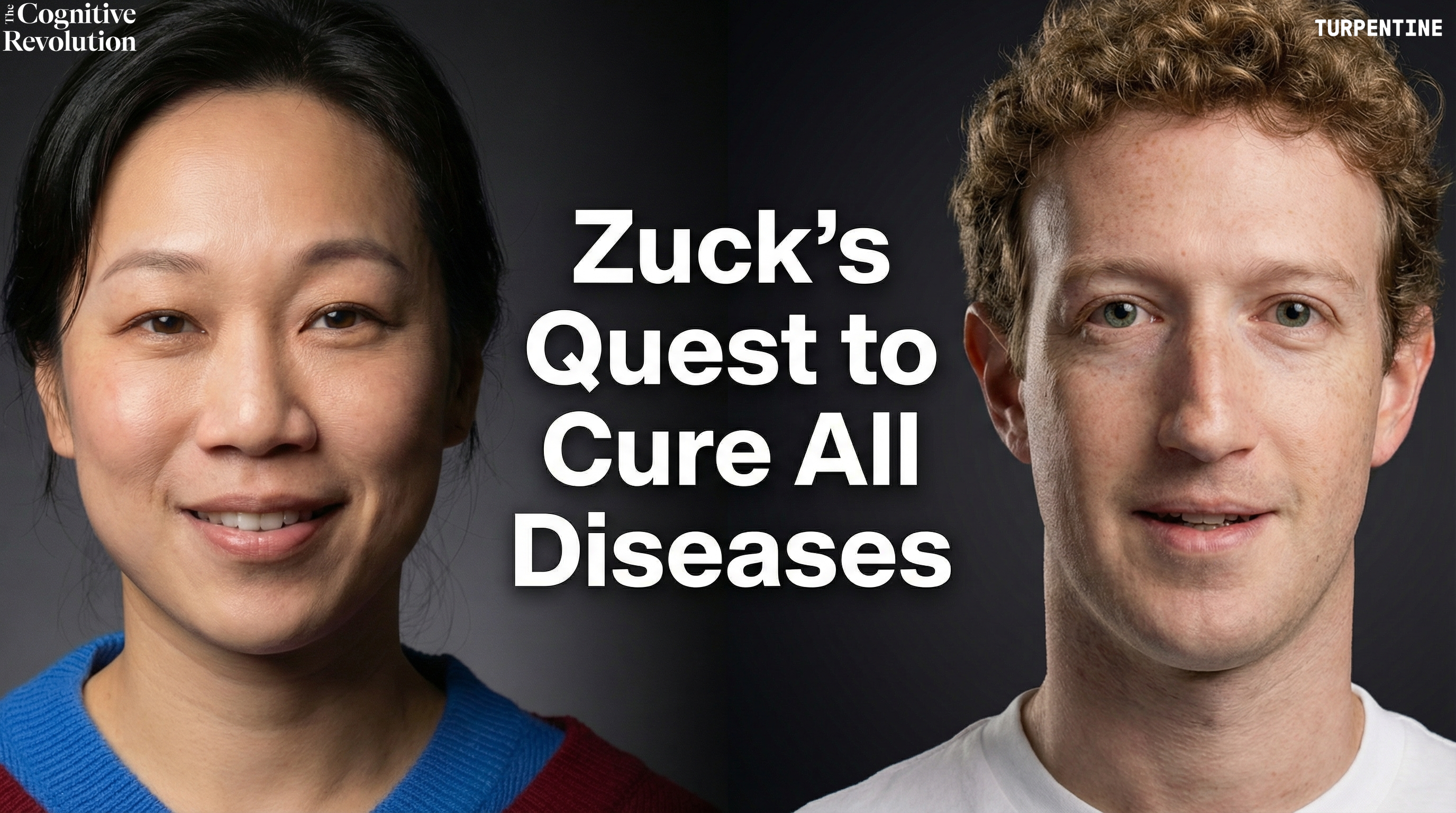

The AI-Powered Biohub: Why Mark Zuckerberg & Priscilla Chan are Investing in Data, from Latent.Space

Mark Zuckerberg and Priscilla Chan join Latent Space to discuss the Chan Zuckerberg Initiative's expanded Biohub, integrating frontier biology and AI labs to build a virtual cell, scale biological data, and advance precision medicine and drug discovery.

Show Notes

This crossover episode from the Latent Space podcast features Mark Zuckerberg and Priscilla Chan on the 10-year anniversary of the Chan Zuckerberg Initiative and their expanded Biohub vision. They discuss how a “Frontier Biology Lab” working in sync with a “Frontier AI Lab” could enable breakthroughs like a Virtual Cell and true N-of-1 precision medicine. The conversation covers the acquisition of Evolutionary Scale and ESM3, new biological data collection at scale, and how AI-powered biology might transform drug discovery and disease prevention.

Sponsors:

Blitzy:

Blitzy is the autonomous code generation platform that ingests millions of lines of code to accelerate enterprise software development by up to 5x with premium, spec-driven output. Schedule a strategy session with their AI solutions consultants at https://blitzy.com

Framer:

Framer is an enterprise-grade website builder that lets business teams design, launch, and optimize their .com with AI-powered wireframing, real-time collaboration, and built-in analytics. Start building for free and get 30% off a Framer Pro annual plan at https://framer.com/cognitive

Serval:

Serval uses AI-powered automations to cut IT help desk tickets by more than 50%, freeing your team from repetitive tasks like password resets and onboarding. Book your free pilot and guarantee 50% help desk automation by week four at https://serval.com/cognitive

Tasklet:

Tasklet is an AI agent that automates your work 24/7; just describe what you want in plain English and it gets the job done. Try it for free and use code COGREV for 50% off your first month at https://tasklet.ai

CHAPTERS:

(00:00) About the Episode

(04:27) CZI origins and focus

(08:29) Why tools over cures

(14:43) Virtual cells and imaging (Part 1)

(20:19) Sponsors: Blitzy | Framer

(23:24) Virtual cells and imaging (Part 2)

(25:22) Data diversity and grounding

(32:30) Evaluating models and Biohub (Part 1)

(37:53) Sponsors: Serval | Tasklet

(40:42) Evaluating models and Biohub (Part 2)

(41:06) Future healthcare and aging

(53:39) Modeling scales and immunity

(58:53) Timelines, data, and collaboration

(01:04:01) Outro

SOCIAL LINKS:

Website: https://www.cognitiverevolution.ai

Twitter (Podcast): https://x.com/cogrev_podcast

Twitter (Nathan): https://x.com/labenz

LinkedIn: https://linkedin.com/in/nathanlabenz/

Youtube: https://youtube.com/@CognitiveRevolutionPodcast

Spotify: https://open.spotify.com/show/6yHyok3M3BjqzR0VB5MSyk

Transcript

This transcript is automatically generated; we strive for accuracy, but errors in wording or speaker identification may occur. Please verify key details when needed.

Full Transcript

(00:00) Nathan Labenz:

Hello, and welcome back to The Cognitive Revolution. Today, I'm excited to share a special crossover episode from the Latent Space podcast. Latent Space, surveys say, is the number one podcast for AI engineers, and I find hosts Swyx and Alessio a consistently outstanding source of insight into the latest trends in AI-powered coding and AI application development. Today's episode, though, is a bit different: a conversation with Mark Zuckerberg and Priscilla Chan, who are celebrating the 10-year anniversary of the Chan Zuckerberg Initiative, about why they are doubling down on the interdisciplinary Biohub with the goals of leading a new era of AI-powered biology and ultimately equipping scientists to cure or prevent all disease in the coming decades. In this conversation, Mark and Priscilla describe their perspective on the current state of biology, the role they see AI playing going forward, and the strategy underlying their investments. Highlights include why the traditional funding model fails to bring scientists, engineers, and AI experts together to tackle the most important problems in the way we might hope; their vision for a frontier biology lab that works in sync with a frontier AI lab; the acquisition of Evolutionary Scale, creators of leading protein model ESM3, and the appointment of CEO Alex Rives to lead the combined program; their plan to develop new data collection techniques, which will naturally give rise to massive datasets on which new AI models can be trained; the roadmap to a virtual cell capable of simulating biological responses in silico, potentially revolutionizing not just drug discovery but our understanding of biology in general; and their ultimate vision for precision medicine, moving from clinical trial and error to true N-of-1 treatments designed based on each individual's unique biology.
While the conversation itself focuses on the intersection of AI and biology, for me, it also serves as an important reminder of the unique role that private capital often plays in scientific progress. And considering the current moment, the importance of classical liberal values more broadly. Ironically, for all we hear that the US must win the AI race to ensure that the best AI models project American rather than Chinese values around the world, I see actors across the political spectrum pushing America toward a more Chinese model of state dominance. The civil rights violations and abuses of power we're seeing right now from the federal government are plainly un-American. And I've been glad to see prominent voices in the AI space, including Jeff Dean at Google, Dario and Chris Olah at Anthropic, and various researchers at OpenAI speaking up against them. If anything, I think the AI industry ought to consider doing more, starting by signaling that they would be willing to withhold their technology from a government that proves itself unworthy to wield such power. But at the same time, and certainly to a much lesser degree right now, I do also worry that recent proposals for confiscatory taxes, if enacted, would make moonshots like the Biohub much rarer. I do agree, of course, with Warren Buffett that it's absurd that he pays a lower tax rate than his secretary. But at a time when the federal government is cutting research budgets and generally acting against medical advice, society stands to benefit tremendously when self-made tech billionaires turn their formidable talents and immense resources to solving global problems and providing public goods. And even more generally, as fears of concentration of AI power grow, it only seems prudent that society should maintain a diverse set of independent power centers, which can hopefully balance and exist in equilibrium with one another. 
Such checks and balances were, of course, central to the framers' vision for the US government. And in my view, they remain essential for societal dynamism and resilience today. With that, I'm grateful to Swyx for allowing me to cross-post this conversation. I, of course, recommend subscribing to the Latent Space feed where they've just brought on new hosts to cover AI for science on a dedicated basis. And I hope you enjoy this preview of the future of AI-driven biology and medicine with Mark Zuckerberg and Priscilla Chan from the Latent Space Podcast.

(04:28) Alessio:

Hey, everyone. Welcome to the Latent Space Podcast. This is Alessio, founder of Kernel Labs, and I'm joined by Swyx, editor of Latent Space.

(04:34) Swyx:

Hello. We're so delighted to be in the Imaging Institute of CZI with literally C and Z. Welcome, Mark and Priscilla.

(04:41) Priscilla Chan:

Thanks for having us. Thanks for getting nerdy.

(04:44) Mark Zuckerberg:

Yeah. We're excited to do this.

(04:45) Swyx:

We so don't often get to see this side of you, and so thank you for taking some time out to talk about this. And it's sort of the 10-year anniversary of CZI. So I just wanted to introduce people if they have not been caught up. One of the interesting things that we found out just from talking to your teams is there's an interesting difference between how you guys started CZI and the Gates Foundation. And I heard that Bill Gates is a mentor of yours. So maybe you could tell that story of deciding to start CZI and deciding to pursue basic science instead of translational work.

(05:19) Mark Zuckerberg:

Well, I think one of the core things for us with CZI was just getting started earlier. We got some advice that basically philanthropy and doing science, just like any other discipline, requires practice. And you're not going to be good at it overnight. So we should just kind of dig in and start doing a few different iterations on it and see what we enjoy and where we think we can have an impact and go from there. So yeah, like you mentioned, we're coming up on in November the 10-year anniversary of when we started CZI. And there's a lot of work that we're really proud of that we've been a part of, including work in education and supporting communities. But when we reflect on it, we feel like the work that we've done in science really has had the biggest impact and in a lot of ways is accelerating. And with especially all the advances in AI that are coming, I think the ability to have an even bigger impact over the coming decade -- it just seems really clear like this is coming into focus. So for the next period, we really want to make science the main focus of what we're doing. And specifically the Biohub organization that we're really proud of, this model that we've helped pioneer that we can go into detail on, is really going to be the main focus of our philanthropy. And it's just something that we're very excited about.

(06:41) Priscilla Chan:

Yeah. When we started 10 years ago, we had this idea like, okay, I bring experience as a physician. Mark's an engineer, and he builds things. And we have an opportunity to give back resources to make an impact on this world. And we just tried a bunch of things. And the thing that, in running a philanthropy, I'm incredibly envious of people who run companies, is that you guys can have a dashboard and there's financial results, and people tell you if you're on the right track, on the wrong track, and there's clarity. But in philanthropy, there's so much you can do, and it takes a long time for you to get a sense of what has momentum. What are we doing that is actually bringing all of our both skills and resources to maximal impact? So over the past 10 years, I would say we've been getting a sense of what is that thing that really allows us to have the impact and makes the most of what we bring to the table. And it's really been around AI and biology where we're like, oh my gosh, this is it. And the ecosystem is big. We really think our ability to bring great scientists, great AI researchers together between the wet lab and the compute, the ability to bring physicians and patients into the picture, that's a unique niche for us at the Biohub. And we need others to take the work to translation. The Gates Foundation has a strong focus on translation and the field. And we have had a number of really awesome collaborations and continue to, where we really look at the basic fundamental research and being able to partner with someone who's thinking about the translation layer is incredible.

(08:30) Alessio:

We kind of see the first decade, and I would love to get your take, as a decade of creating data, creating a science ecosystem, and then starting to work on some of the models. And the next decade maybe is more of the applied modeling side. At what point did you decide that just doing the tooling would matter versus you could have cured malaria in Africa too or some other disease?

(08:55) Mark Zuckerberg:

I mean, take a step back, and this is kind of related to your first question too. Like Priscilla was saying, the space is huge. There are lots of other philanthropists, including Gates, who I think they would say that they're primarily focused on public health and sort of administering. Like once you know what a cure is, just getting it out to the world is a huge thing too. And someone needs to do that, and that's a lot of work and a lot of resources, and it's good that they're doing that. Basic science is another completely different part of the innovation funnel to enable that. And our view is that the federal government basically dwarfs everyone else in terms of how much they invest through NIH. But there's a certain pattern to how they invest, which is really enabling a lot of individual investigators to do work. And our kind of observation is that if you look at the history of science, a lot of major advances are basically preceded by new tools or new ways of observing things. So the initial telescope allowed a lot of advances in astronomy. The microscope, the invention of that allowed a lot of understanding of biology. And similarly, I think we're at a point in history where a lot of new tools are being built. Computational tools, tools to instrument the body in different ways and understand things. And often that tool development just takes a longer-term timeframe and sometimes a larger commitment of capital, including the way to do it isn't necessarily just to make grants to a lot of different people. You need to really operate it yourself, which I think is one thing that's different about the way that we've operated than others. Most times when you think about philanthropy, you think about kind of giving money away in terms of grants. And a lot of what we're doing is actually building up these institutes and building labs to do that kind of research ourselves by bringing in leading scientists and engineers and all that. 
But that's kind of the strategy -- we feel like there's a lot of new tools to develop. There's sort of been a hole in the ecosystem where tool development and the 10 to 15 year runway that you need to do that and often hundreds of millions of dollars to build things like the microscopes and imaging that you're seeing in this institute here. I think that that's been sort of underfunded. And that's where we think that if we do that kind of work, it can just give all these other scientists way more tools to accelerate the pace of research, hopefully discover cures, and then you have folks who are focused on public health who bring that out to the world and deploy it to everyone.

(11:25) Priscilla Chan:

Yeah. Our mission is to cure, prevent all diseases, and that's not going to happen just in our four walls. So the strategy has to be how do we make every single scientist and everyone better and more effective? And the strategy Mark talked about is sort of where we landed on how to actually maximally move the field forward.

(11:46) Swyx:

Yeah. The mission is cure, prevent all diseases. By the way, a lot of people outside of the CZI world still find this concept very alien, but talking to the CZI people, they really truly believe it. And it's impressive how you pick the right mission to motivate everyone to work towards this enormous task.

(12:03) Mark Zuckerberg:

Well, it's kind of a funny thing. We like to talk about the mission as helping scientists do it, right? Because we're not actually curing the diseases. We're just trying to build the tools --

(12:13) Swyx:

Data, models.

(12:14) Mark Zuckerberg:

Yeah, basically accelerating scientists' work towards that. But a funny thing about it is we had this initial timeframe of by the end of the century. And when you ask biologists, there's a lot of questions around, okay, that's really ambitious. Are we going to be able to do that? And then when you ask AI people --

(12:34) Swyx:

Yeah, they get --

(12:34) Mark Zuckerberg:

It's like, that should be really easy. Like, why are you so unambitious that you're shooting for just the end of the century? And I do think that at the pace that AI is improving things, it might be possible significantly sooner than that. I don't think it's necessarily worth putting a number on it or a date. But I think it's your point about the first decade -- it was sort of about doing work like the Cell Atlas to be able to help understand basically all of the specifics and data about all the different configurations of every cell in the body. When we did that, we kind of had this vague notion that that would be useful to advance science. But I think like a lot of people in the tech industry, we have even been impressed by how quickly AI has accelerated. But that ended up being a really valuable thing to have done over the last 10 years, especially for where AI is now and the models that can get built with that.

(13:28) Priscilla Chan:

But the thing that's interesting, don't you agree, is like, okay, so from a tech -- I totally agree that in our intersection of AI and biology, the AI folks are like --

(13:37) Swyx:

Yep.

(13:37) Priscilla Chan:

The biologists are like... And I think it's actually that confluence of conversations that leads both the biologists to be like, okay, I'm really uncomfortable about this idea and timeline. But if I'm really pinned down to think about it, what are -- you really force people to think through, okay, what are actually the barriers? What would you need to do? And you're forcing that conversation from the biologist side. And from the AI side, really getting a sense of, okay, data is not just data. You guys know this. You need to know sort of how the data was collected and from where, and being able to connect the AI researchers to the folks who are actually gathering the data on a daily basis makes their work better. And so it's that conversation that's happening here that I think makes people outside so excited about this because it's credible. And they've really dug in and thought through how that would work. And they're excited and they believe, and believing is the first step.

(14:44) Swyx:

Believing is the first step. There's a general pattern of software eating the world, and I think AI eating the world is kind of the next version of this. I was talking with Garrett outside who says he's a biologist, but I think he's using models like SAM from Meta.

(14:55) Priscilla Chan:

You're like, you don't look like only a biologist.

(14:58) Mark Zuckerberg:

What does a biologist look --

(15:00) Swyx:

I know. I mean, he's saying --

(15:01) Priscilla Chan:

Like he's working on models out there, and that's like biologists are working using models, right? They're not just in the wet lab.

(15:09) Swyx:

Yeah. I think one of the key approaches that you're pursuing is turning things in -- pursuing a virtual cell, turning things from mostly wet lab into something in silico. How far along are we?

(15:24) Mark Zuckerberg:

It's pretty early, right? I think the first step, which I think is easy to overlook, is basically what Priscilla was talking about of just getting these folks together. It's worth taking a beat just to talk about this, because I think most people assume that this is like, obviously you would go do that. But it's somewhat novel in science because of the way that a lot of funding has been done. Basically, you grant individual teams relatively small grants and people do a lot of science independently. It is, I think, pretty amazing how much progress you can make if you just have people from different disciplines sit together. Over my career, both at Meta and here, it's like you have teams that are not working together for some reason or they disagree on something. It's like, okay, physically just have them next to each other. And it actually is super helpful. So here, what are we doing? It's not just bringing together the biologists and the engineers, which was a core part of the initial Biohub model, but it was also unlocking the ability for people to work together across institutions. So the first Biohub that we started out here between Stanford, UCSF, and Berkeley allowed a lot more collaboration between scientists and engineers at those universities than was in practice happening before. And you can look at this and be like, all right, that seems really obvious. But it actually was sort of an interesting and novel experiment and one that I'm really happy to see others also implementing because I think it's just such a clear win, just the human side of bringing people together and having them sit together. So anyway, that I would say is kind of step one or step zero and is probably quite overlooked, but is sort of a fundamental part of the model that I guess also goes back to this idea of like, we're not just granting funds to other people. We're building an institution and we're having people sit together. So then you get that. 
And then you get these people who are like half biologist, half AI engineer because they kind of have some experience doing it. And we can talk through the specific models and there's a lot of exciting stuff there, but I'd say it's an early glimpse of where this is all going. I think you want to kind of build up these models hierarchically. So you give them a lot of data about specific proteins and they can model specific proteins in the cells. And then you can model different cell behavior. And then eventually you kind of zoom out and you're modeling like a virtual immune system or something like that. And it's sort of hard to simulate the immune system without having a good understanding of how a cell might work. And it's kind of hard to understand or simulate how a cell might work if you don't really understand how the proteins interact. So you kind of need systems that understand data at all different levels of this and then you pull them together. And then if you look at the different models, there are versions that are focused on which parts of the genome are being expressed in different ways. And the cryo model that I think is very interesting that's built off of the data here, the only model that I'm aware of that's a spatial model of basically how these cells work. And you kind of want to be able to look at stuff from different perspectives and then put them together and you build a richer and richer model of how these cells work. But we are definitely at the beginning of this journey.
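
As a rough illustration of the hierarchical build-up described in this answer (proteins, then cells, then something like an immune system), here is a minimal Python sketch. Every function, name, and number in it is a hypothetical placeholder chosen for illustration; none of it represents an actual Biohub model or API. It only shows the shape of the idea: each level consumes the output of the level below it, and the intermediate results are kept so the levels can be inspected together.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical placeholders only: these are NOT real Biohub models.

@dataclass
class Level:
    name: str
    # Maps the outputs of the level below to this level's outputs.
    model: Callable[[List[float]], List[float]]

def protein_model(sequence_features: List[float]) -> List[float]:
    # Stand-in for a protein-level model: one score per protein.
    return [x * 0.5 for x in sequence_features]

def cell_model(protein_scores: List[float]) -> List[float]:
    # Stand-in for a cell-level model: averages protein scores into one cell state.
    return [sum(protein_scores) / len(protein_scores)]

def immune_model(cell_states: List[float]) -> List[float]:
    # Stand-in for a system-level model: takes the strongest cell state.
    return [max(cell_states)]

def run_hierarchy(levels: List[Level], inputs: List[float]) -> Dict[str, List[float]]:
    """Feed each level's output into the next and record every intermediate result."""
    trace: Dict[str, List[float]] = {}
    current = inputs
    for level in levels:
        current = level.model(current)
        trace[level.name] = current
    return trace

levels = [
    Level("protein", protein_model),
    Level("cell", cell_model),
    Level("immune_system", immune_model),
]
trace = run_hierarchy(levels, [0.2, 0.4, 0.6])
for name, values in trace.items():
    print(name, values)
```

The point of keeping `trace` is the one made in the conversation: the system-level output is only as good as the cell-level and protein-level outputs it is built on, so each layer needs to be visible and grounded in its own data.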

(18:43) Priscilla Chan:

But it's like slow and fast, slow and fast, right? So when we built the Human Cell Atlas, we started 10 years ago. It was one of our first RFAs, and the first RFA was to fund the methodologies of how you would get a single cell transcriptome. And it took us about 10 years to get to a place where we now have one of the largest corpora of RNA transcriptomes, 125 million cells. Cost a lot of money. And the really cool thing we discovered through that process was if we could seed the effort and make it easy for people to contribute, it happened. That's CELLxGENE. We're actually responsible for maybe 25% of the data, and the rest of the ecosystem contributed 75% of that. That's an incredible asset and has been very important in modeling work. Similarly, if you look at AlphaFold, they built off publicly available data that was collected for 30 years prior. So that takes a long time. But now we're doing the billion cell project, and that is taking months and at a fraction of the price. Really slow to fast. But it's a single dimension, and cells are so complicated. And here we're looking, like Mark said, at the three-dimensional imaging structures. And it's slow and expensive. But with the cryo model, it will get fast again. And you just have to repeat it, and so I think we'll get growth spurts, but it's all happening just faster and faster.
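
To put the figures in this answer side by side, the short Python sketch below works through the arithmetic. It uses only the numbers quoted above (125 million cells, roughly 25% contributed directly, a billion-cell target); the percentages were stated as approximate, and comparing the billion-cell target against the existing corpus is an assumption made here for illustration.

```python
# Figures quoted in the conversation; the 25% share was given as "maybe 25%".
TOTAL_CELLS = 125_000_000             # single-cell transcriptomes in the corpus
DIRECT_SHARE = 0.25                   # approximate share contributed directly
BILLION_CELL_TARGET = 1_000_000_000   # the billion cell project

direct_cells = int(TOTAL_CELLS * DIRECT_SHARE)
ecosystem_cells = TOTAL_CELLS - direct_cells
scale_up = BILLION_CELL_TARGET / TOTAL_CELLS

print(f"Direct contribution: ~{direct_cells:,} cells")
print(f"Ecosystem contribution: ~{ecosystem_cells:,} cells")
print(f"Billion-cell target is {scale_up:.0f}x the current corpus")
```

On these numbers, the ecosystem contributed roughly three cells for every one contributed directly, and the billion-cell target is eight times the corpus that took about ten years to assemble.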

(20:14) Nathan Labenz:

Hey. We'll continue our interview in a moment after a word from our sponsors.

(20:19) Nathan Labenz:

Want to accelerate software development by 500%? Meet Blitzy, the only autonomous code generation platform with infinite code context, purpose-built for large, complex, enterprise-scale codebases. While other AI coding tools provide snippets of code and struggle with context, Blitzy ingests millions of lines of code and orchestrates thousands of agents that reason for hours to map every line-level dependency. With a complete contextual understanding of your codebase, Blitzy is ready to be deployed at the beginning of every sprint, creating a bespoke agent plan and then autonomously generating enterprise-grade, premium-quality code grounded in a deep understanding of your existing codebase, services, and standards. Blitzy's orchestration layer of cooperative agents thinks for hours to days, autonomously planning, building, improving, and validating code. It executes spec- and test-driven development done at the speed of compute. The platform completes more than 80% of the work autonomously, typically weeks to months of work, while providing a clear action plan for the remaining human development. Used for both large-scale feature additions and modernization work, Blitzy is the secret weapon for Fortune 500 companies globally, unlocking 5x engineering velocity and delivering months of engineering work in a matter of days. You can hear directly about Blitzy from other Fortune 500 CTOs on the Modern CTO or CIO Classified podcasts, or meet directly with the Blitzy team by visiting blitzy.com. That's blitzy.com. Schedule a meeting with their AI solutions consultants to discuss enabling an AI-native SDLC in your organization today.
AI agents may be revolutionizing software development, but most product teams are still nowhere near clearing their backlogs. Until that changes, if it ever does, designers and marketers need a way to move at the pace of the market without waiting for engineers. That's where Framer comes in. Framer is an enterprise-grade website builder that works like your team's favorite design tool, giving business teams full ownership of your .com. With Framer's AI wireframer and AI workshop features, anyone can create page scaffolding and custom components without code in seconds. And with real-time collaboration, a robust CMS with everything you need for SEO, built-in analytics and A/B testing, 99.99% uptime guarantees, and the ability to publish changes with a single click, it's no wonder that speed, design, and data-obsessed companies like Perplexity, Miro, and Mixpanel run their websites on Framer. Learn how you can get more from your .com from a Framer specialist or get started building for free today at framer.com/cognitive and get 30% off a Framer Pro annual plan. That's framer.com/cognitive for 30% off. Framer.com/cognitive. Rules and restrictions may apply.

(23:24) Alessio:

How do you think about the layers? So you have compute, and we'll talk about that later. On the data side, you build these amazing microscopes. I learned that they're all built for you by spec. They're not off-the-shelf things --

(23:36) Swyx:

Yeah. Yeah.

(23:38) Alessio:

How much of a bottleneck is that still? Can we convert the world of atoms into bits now at the right precision? Or do we need more work on the microscopes themselves too?

(23:51) Mark Zuckerberg:

You're never done. Right.

(23:54) Priscilla Chan:

Well, for here, speed has been a big question of just getting the process through. So here, we've worked on the speed at which we can look at tomograms and the contrast and resolution, and that's where the laser phase plate comes in. So to be able to make the data better and faster to get the data. But it's a bottleneck in so much as there's only -- I don't know the exact number. There are maybe tens of these microscopes in the world. So that's one bottleneck. And I think really, like when I was saying it's slow and then fast, there's so many other dimensions that we don't have yet. The cool thing here is with the transcriptome work, we're looking at cellular expression. And with the imaging work, you're able to localize it in space, and now you want to connect those two. But that's still just two dimensions connected. Time is another dimension. We need to get dynamic imaging in place.

(24:49) Swyx:

Oh god. That's so much --

(24:52) Priscilla Chan:

I know.

(24:53) Swyx:

Resolution. Yeah.

(24:53) Priscilla Chan:

Right? But really cool biological innovation. We need innovation in the way we can look at things, like stain-free, dye-free. So we can look at things without human intervention, with time as a dimension, because we are not frozen slices. So I think it's just continuously looking at what the next dimension we want to be able to either understand deeply or connect to our existing corpus of data and knowledge.

(25:23) Mark Zuckerberg:

And obviously the ideal would be you want to increasingly be able to image things inside living cells, right? You can kind of simulate it a bit by, okay, you can take a cell out or some culture -- it's destructive. Yeah. It's like, okay, it's living for a little bit or something. But you really want to be able to as much as possible actually understand what's going on in living organisms.

(25:45) Swyx:

Can that be done? What are the approaches?

(25:47) Mark Zuckerberg:

Well, the better it gets.

(25:48) Priscilla Chan:

Well, there's this cool methodology. So there is a really high-intensity X-ray methodology you can use. The organ has to be dead. So you can just shoot X-rays, high-intensity X-rays at like a lung and understand at a sort of molecular level how the lung is assembled. And then you can correlate that with living imagery, right? MRIs of the lungs, CTs of the lungs, and look at the associations between the living images in real patients with the sample that you put into the high-intensity X-ray. So that's another example of correlating data types so that we can get that sort of high-level specificity with clinical data that impacts humans.

(26:34) Mark Zuckerberg:

But at some level, that's sort of the point about building these AI biological models -- you can have a lot of data and you can interpolate on that space and --

(26:44) Priscilla Chan:

Understand that.

(26:45) Mark Zuckerberg:

So one of the models that, again, this is really early work, but the rBio model -- the idea of doing reasoning is that then you don't just get correlation, but you get some understanding of logic over how these things get together too. So yeah, I think it's probably going to be a while and people don't have great hypotheses on how you'd actually do molecular imaging of a cell deep inside a living organism. But the goal is to be able to approximate that as much as possible with this kind of surround view of different things that you can image.

(27:22) Priscilla Chan:

You guys like to see cool stuff. It's not here, but at our San Francisco site we do image see-through fish called zebrafish.

(27:31) Mark Zuckerberg:

Zebrafish. Yeah. That's another good example. Another good model. It's like, what's a good way to image a living thing? Take a see-through thing.

(27:39) Priscilla Chan:

Take a see-through thing. And then use a model to say, how does this see-through thing actually relate to us? Like, I'm not that interested in cure, prevent, manage all disease for zebrafish. I'm very --

(27:52) Mark Zuckerberg:

Speak for yourself.

(27:53) Alessio:

For zebrafish? Yeah.

(27:55) Priscilla Chan:

Mark's pro zebrafish. I'm okay on zebrafish. But you need to use another application of large language models, which is looking at what is conserved and what is actually relevant and important to the way human biology works in a fish model. And so being able to have that translation be more effective so we don't waste our time on things that won't apply in a model organism is another really interesting way to elevate biology.

(28:22) Alessio:

On the data side, can you just give an overview of how far we are? Like, what percentage of all cells have we imaged and do we have? What's the distribution of them? When you say 125 million to 1 billion cells, is that a lot? Is that 10%?

(28:39) Priscilla Chan:

The funny thing is until recently, we didn't know how many cell types --

(28:42) Swyx:

Yeah.

(28:42) Mark Zuckerberg:

This is kind of a --

(28:43) Alessio:

Wild thing.

(28:44) Mark Zuckerberg:

This was a big part of the Cell Atlas project, like, there wasn't even -- it's kind of like imagine the periodic table in chemistry, but it doesn't end.

(28:52) Alessio:

You don't have the squares.

(28:54) Priscilla Chan:

We know it's billions. We know there are billions of cell types in a human. And we've only truly looked at a fraction of them, and we've looked at it in largely healthy cells. And so just the number of permutations of age -- well, species, because not all research is in humans. So species, ancestries, what is your sort of genetic background, age, like babies are different than old people, gender. All those things actually are permutations, environmental exposures. All of those things are permutations on the cell that you want to be able to understand in healthy and disease states. I feel confident that we are at the beginning of this.

(29:37) Swyx:

I'll ask a little bit of an obvious question in terms of the intersection of AI and bio, which is, don't we want precision? In biology, don't we want some grounding in a world model maybe that we don't normally get in a language model?

(29:53) Mark Zuckerberg:

Yeah. I think that's sort of the point of doing all the measurement and being able to have all this real -- so you have the diffusion model for generating cells that we put out. It's one of the recent models. And it's cool because you can basically -- you have a model now that you can describe the conditions and it'll basically give you a synthetic cell. But yeah, you want it to be increasingly grounded, and that's a lot of the point of the biology and the engineering that we're doing, to be able to have these different facets of that. So the Imaging Institute is one part that gets you the spatial data that's very helpful. And the work that we're doing in the other Biohubs on cellular engineering and instrumenting inflammation and things like that -- it's basically scientific work to build new types of tools that allow us to measure new types of things that generate data that allow us to ground the models in different ways. One framing that we have on this that I think is pretty interesting is that there's this concept of a frontier AI lab that is building AI models that are sort of at the frontier of what's possible. And I think you can think about biology in that way too. There's sort of a concept of a frontier biology lab. Like, what is the idea of labs that are at the cutting edge of building the most advanced imaging, measuring inflammation, or doing cellular engineering in the most advanced ways, whatever the problem space is. And then there's this interesting problem space of what happens if you're at the intersection of those two areas. So you mentioned the work that DeepMind did on AlphaFold, which is great. That's an example of a frontier AI lab using a dataset that was just generated by other scientists over decades. 
But I think part of what we're trying to unlock here with Biohub is the idea of what actually happens if you do frontier biology and frontier AI in sync together, and you're designing the tools on the frontier biology side in order to specifically collect and learn types of data that you then want to feed into specific types of models that you want to build so that it can understand the cells and the body at different types of resolution. I think it's a much more integrated approach that allows designing the things that you need that should eventually get towards more grounding and not just allowing folks who are good at AI to do the best they can with whatever biological data happens to be available.

(32:30) Alessio:

What's the hill climbing in this scenario? So with language models, you have benchmarks. You look at the benchmark. You just make that go better. With these things, you have to bring it back to the real world. So as you build these models, do you bring the two teams together to get feedback?

(32:45) Priscilla Chan:

I think it's very similar to what Mark just said. You want to be able to validate on the accuracy question. We don't expect that these models -- they will get increasingly accurate, but you want to be able to have feedback. And it's not as easy as being like, this output doesn't make sense. You have to actually take it to the wet lab, run the experiment, find out if it actually happened as predicted, and feed it back into the model. And that's the virtuous cycle we want to build to help the AI best serve the biologists and the biologists be part of continuously improving the models.

(33:21) Alessio:

From a numbers perspective, in a language model, you can run tens of thousands of tests.

(33:28) Mark Zuckerberg:

Very fast. Yeah. And we have to build a lot of them out.

(33:31) Alessio:

Yeah. And then on going to the wet lab, what do you think the feedback cycle is going to be like? As you start to have more of these things to be tested in the wet lab, do you feel like that's going to be a bottleneck, like we cannot take that many?

(33:44) Priscilla Chan:

I don't know the answer to that yet. I think the throughput on established metrics in the wet lab is actually getting quite fast. You can run in parallel a lot of experimentation. But it's not easily at the tens of thousands of verifications. We'll have to see. We'll probably need to be smart about how we do it.

(34:09) Mark Zuckerberg:

But a lot of people, I think, often take these things to the extreme and are like, okay, pretty soon if you have these models, you're just going to be able to run experiments with the models without even having to go to a wet lab. No. I think that's sort of the biological version of eventually AI is going to automate every single thing in society. Look, maybe you get there, right? And I think that there's some chance over time. But well before you do, you're going to be able to have models that can help generate hypotheses and scientists can apply their taste on which ideas or suggestions are worth testing. And then you test them and then you feed it back into the model, which I think is basically the way that every AI model is deployed into work in other places.

(34:58) Priscilla Chan:

Right now, because the wet lab is so expensive and relatively slow compared to computational experimentation, people are choosing like, I need something to hit. So people are going for hypotheses or ideas that are, to use a sports analogy, like singles or doubles. It's just too risky. They only have so much grant funding and they need something to help move their work along. But if we have a model that can help de-risk some of the bigger, riskier ideas, that's going to move science faster. And I think it makes the science and those ideas both -- they can be sourced with AI as a tool, but really it's about making the scientist less hesitant to explore big ideas.

(35:47) Swyx:

Yeah. Obviously, that's a lot of the success of the model of CZI, which is serving this part of research that is underserved because there was basically no benefactor or no funding mechanism by which to do this. One thing that we're announcing when we release this podcast is this unification of the Biohub model. I think it's very analogous to the foundation model in the frontier lab approach, where you bring together people of different disciplines. You have much longer time horizons than anyone else. Are there any other key elements to the strategy of the Biohub that you're taking?

(36:20) Mark Zuckerberg:

Well, one thing that we haven't talked about is the Evolutionary Scale team and Alex Rives and his team joining. They're like --

(36:27) Swyx:

Let's talk about the announcement.

(36:28) Mark Zuckerberg:

Yes. This is probably the most talented team working on AI and biology, at the intersection of good biology background and also they've just been working on --

(36:41) Swyx:

ESM3.

(36:42) Mark Zuckerberg:

Yes, some of the top protein models for a long period of time. Yeah, I think if you want to build an organization that is doing frontier biology and frontier AI, you need to have world-leading AI researchers. And we're doing that by basically combining the team that we have that's already put out all the models that we're talking about today, plus having the Evolutionary Scale team, which is just very renowned, join. And Alex is basically going to be running the program. So I think it's an interesting decision, to have the AI person basically be running the overall program, partnering with these leading biologists. I think it gives a sense of how optimistic we are about the AI work being very fundamental to this. But we're very serious about building out a leading lab on the AI side as well. That goes for both the talent and the compute. I think we were probably the first to build out a large-scale compute cluster for biological research. I think now there are some others who are doing it too, but we're also building on that. And we really plan to release frontier models.

(37:53) Nathan Labenz:

Your IT team wastes half their day on repetitive tickets. And the more your business grows, the more requests pile up. Password resets, access requests, onboarding, all pulling them away from meaningful work. With Serval, you can cut help desk tickets by more than 50%. While legacy players are bolting AI onto decades-old systems, Serval was built for AI agents from the ground up. Your IT team describes what they need in plain English and Serval AI generates production-ready automations instantly. Here's the transformation. A manager onboards a new hire. The old process takes hours. Pinging Slack, emailing IT, waiting on approvals. New hires sit around for days. With Serval, the manager asks to onboard someone in Slack and the AI provisions access to everything automatically in seconds with the necessary approvals. IT never touches it. Many companies automate over 50% of tickets immediately after setup and Serval guarantees 50% help desk automation by week 4 of your free pilot. As someone who does AI consulting for a number of different companies, I've seen firsthand how painful manual provisioning can be. It often takes a week or more before I can start actual work. If only the companies I work with were using Serval, I'd be productive from day one. Serval powers the fastest growing companies in the world like Perplexity, Verkada, Mercor, and Clay. So get your team out of the help desk and back to the work they enjoy. Book your free pilot at serval.com/cognitive. That's serval.com/cognitive.

(39:31) Nathan Labenz:

The worst thing about automation is how often it breaks. You build a structured workflow, carefully map every field from step to step, and it works in testing. But when real data hits or something unexpected happens, the whole thing fails. What started as a time saver is now a fire you have to put out. Tasklet is different. It's an AI agent that runs 24/7. Just describe what you want in plain English. Send a daily briefing, triage support emails, or update your CRM. And whatever it is, Tasklet figures out how to make it happen. Tasklet connects to more than 3,000 business tools out of the box, plus any API or MCP server. It can even use a computer to handle anything that can't be done programmatically. Unlike ChatGPT, Tasklet actually does the work for you. And unlike traditional automation software, it just works. No flowcharts, no tedious setup, no knowledge silos where only one person understands how it works. Listen to my full interview with Tasklet founder and CEO Andrew Lee. Try Tasklet for free at tasklet.ai, and use code COGREV to get 50% off your first month of any paid plan. That's code COGREV at tasklet.ai.

(40:43) Alessio:

Do you see that as the 10-year output? Like, in the next 10 years, we look back at that --

(40:49) Priscilla Chan:

They say it's faster than that.

(40:50) Mark Zuckerberg:

But AI people are --

(40:51) Priscilla Chan:

They're --

(40:52) Swyx:

Always --

(40:52) Priscilla Chan:

They are always in a hurry.

(40:54) Alessio:

We have AGI in 2 years. So right. Would that be a satisfactory result for you guys? You fast forward 10 years, you have the three best models in biology, or is there a further goal that you want to have as an output of the foundation?

(41:08) Priscilla Chan:

I have to bring it back to the patient. I think the AI models are -- I think we'll be very excited both if we have great models and scientists are using them, but you really want to make sure that it's accelerating clinical impact. That's the goal, right? The AI models are a very challenging milestone that we are working very hard on and we will get there. But how do you actually take those models and apply them to actually change the way people live? And there are two variants that I think about in the application of these models. Why are they important? One is, each one of our genetics is incredibly diverse and different. First of all, we are all -- the four of us are unique people, but we also have things that are sort of known indicators of disease and unknown indicators of disease. And I actually find the variants of unknown significance to be the most interesting and the most frustrating. Say someone that you love, it's sort of a diagnostic mystery. They need to go in and look at the genetics. Most likely, they'll come back and be like, there are these three things that are not usual, but we also don't know why. And you're like, okay, should I panic? Should I not panic? What do I do now? And what you really want to do, and I think these models will be able to do, is look at those variants and actually model out what is the impact in the different cells, how it influences cellular behavior, and whether or not that is tied to a pathway to disease or not. That's a big deal and I think we should be doing that. That is actually the future of medicine where we think about each one of your biology based on your genetics, your exposure, and how that predisposes you or not to disease. That's huge. And we want to be able to see that clinical application, but we can't. It's too expensive, too hard to model each person. Impossible to model each person in the lab. But if we can build models around this, it is possible. 
And then we can start thinking with extreme precision. And I'm not just talking about rare disease. Common diseases. I'll just say depression. Right now, it's empirical. We just say, you're depressed. Here, let's try this antidepressant. And it's usually the one that the doctor's more familiar with or maybe one that you've heard of. And then you have to try it for months before it's like, did it work? Did it not work?

(43:36) Swyx:

Months? Yes. That's the cycle. I don't have familiarity with that. Horrible.

(43:41) Priscilla Chan:

And meanwhile, if it doesn't work, it means the person's suffering. And this applies to almost every disease, right? There has to be some biological explanation as to why some medications work and don't. So can we actually then look at each patient and say, based on who you are, we think this medication is going to work best for you. That's the future I want to live in, where we can actually understand individuals as individuals and use the biology and science very directly to keep them well.

(44:12) Swyx:

Yeah. So if there's a name for this tool that has the clinical impact that is on the scale of the electron microscope, how do you envision it? I feel like it's almost going to be the CZI app, I guess.

(44:27) Priscilla Chan:

Oh, well, it won't be -- first of all, that's not what we're building right now. We're building the basics. We're understanding cells and molecules. So I'm painting --

(44:36) Swyx:

Someone else will do it.

(44:37) Priscilla Chan:

We're painting a picture. We need partnerships. You asked about the ecosystem before.

(44:41) Swyx:

Yeah.

(44:42) Priscilla Chan:

There are experts along the way of this pathway, and so we are at the fundamental research side.

(44:48) Swyx:

Yeah.

(44:48) Priscilla Chan:

And you need to be able to partner with folks to bring this all the way through to impact. But the way I think about it, people call it different things, but essentially you want to get to medicine where it's truly precision medicine, it's N-of-1, we're understanding you and designing therapeutics for you.

(45:07) Swyx:

Yeah. I like the mission of "rare as one" as well. That's a great framing.

(45:10) Alessio:

Do you feel like that's possible? Almost treating the body as like a compiler? It's like, because I know exactly what it looks like, I know exactly what's going to happen? Or is the body just -- there's too many outside inputs and over time it kind of deviates from what you have?

(45:26) Mark Zuckerberg:

Well, I think we'll see how far we can get. But I'm pretty optimistic that we'll be able to make a bunch of progress. And yeah, what format does this take technologically? I would imagine you're taking these different types of virtual cell models and eventually merging them into the equivalent of a biological omni model, kind of like how on the language model side you had people that did language and then people who did different kinds of media models and perception and all that. Then eventually you just kind of merge that. And then you aim to get positive transfer by merging it so that way it's not just combining capabilities but getting everything else to be stronger. So yeah, technologically, I think that's basically what it looks like -- over whatever it is, a 5 or 10 year period, we're building up a series of Biohub models that increasingly get all these different dimensions of data and capabilities that can be used to help run individual science experiments and potentially eventually help with finding individual therapies for patients. Although we're going to be less on the clinical side. We're going to be more on the scientific tool development side. And the main tool, if you will, is these Biohub virtual cell models.

(46:44) Priscilla Chan:

I would say five years ago, without sort of the large language model supporting this, I don't think it would have been possible to really -- because biology is incredibly complex. And what we're essentially trying to do is break it down from a discovery-based science where you kind of get lucky, you kind of get clever, and you sort of figure out a hack to learn something new, to really making it closer to an engineering problem of, this is how the system works, and when this breaks, what happens to the rest of the system? But like you said, there's just far too many dimensions for us to hold in our brains. That's why we're so excited about this intersection at this moment because it is possible to consider so many more dimensions matching the complexity of biology.

(47:31) Alessio:

What is the role of the doctor in that future? If you can predict everything out and then if you take personal superintelligence seriously, do you kind of distribute some of the diagnosis and all of that work? Or how do you envision that?

(47:46) Priscilla Chan:

I've been thinking about this a lot. And I think one, the model's not going to take you all the way. You're still going to need to really look at individual clinical situations, and the doctor is going to be a form of data input into the model. And so there's some judgment that comes into place. But there's already a lot of models that make doctors really good at what they do. For instance, looking at your skin -- AI is really, really good at detecting lesions in your skin that are concerning. It's excellent. Retinal issues. It is excellent. So the AI modeling and mapping is really, really good. It's already happening. So I think about, what should future doctors be trained to do? And I really think care and compassion and walking patients through understanding. I think understanding why leads to trust in both the science and in the clinical pathway. And really walking alongside patients on that journey -- it was the original calling of physicians to be healers and to be using great tools to heal patients.

(49:01) Swyx:

Well, so bedside manner ultimately is --

(49:04) Mark Zuckerberg:

I also think you can zoom out though from the role of a doctor to -- I think everyone wants the health system to be more proactive and less reactive. Today, you show up when you're sick and then you have someone treat you or understand what's going on. I think the goal with a lot of these systems is to be much more proactive about this. So when we say that the vision is to try to help scientists cure and prevent all diseases, it doesn't mean that there's going to be no bacteria in the world and no one ever starts to get an infection. It's just that, ideally you can understand all that really early, right? Similarly, if someone gets a mutation that looks like it might become cancerous, then you can just treat it a lot better if you know that early rather than showing up to a doctor when it's already metastasized and you have a bunch of issues. So I think there are going to be a lot of opportunities to fundamentally improve the healthcare system overall. But I agree with everything that you said on this. And I also just think that when we say that we think it's going to be possible to prevent and cure all diseases, it's not literally that no one ever gets the beginning of a sickness. It's just that it can be managed in a way where everything is sort of manageable.

(50:25) Swyx:

I think we discover more diseases the longer we live. Is it possible to not die? Obviously, that's a meme that's coming to fruition. If you theoretically cure all diseases, maybe death is a disease.

(50:39) Priscilla Chan:

Mark just said we had extreme alignment, which I love. Thank you, honey. This is --

(50:45) Mark Zuckerberg:

This is one that we don't necessarily --

(50:46) Priscilla Chan:

I'm not sure we have extreme alignment on. I, in fact, just haven't thought about this one very much. Because I think there is so much --

(50:54) Swyx:

There's other things to do.

(50:55) Priscilla Chan:

There's so much to do in terms of, I'm a pediatrician. I think about babies, and very sad things happen to very small people. And I think a lot about that, and how do we maximize life quality and the things that harm small people. I'm biased. And I haven't thought as much on the other end of the spectrum. But I don't know. I'm 40, maybe I should, but I feel like I can still focus on the little ones.

(51:23) Mark Zuckerberg:

I think the strategy is the same, right? We're basically choosing to not focus on any specific disease and verticalize. Our strategy is one of trying to accelerate scientific progress overall. And I think that there are a lot of people who are going to focus on each of these individual things. So --

(51:40) Alessio:

I don't know.

(51:41) Priscilla Chan:

But we don't have to because that's not our strategy. Our strategy is to make sure that we have tools that make people do the best science possible out there.

(51:48) Swyx:

Now I'll put to you that because aging and environments and mutations are so diverse, you actually have a tight concentration of grouping in the early years and it should have more diversity in terms of the cell types and the problems that you face in the later years. And so there might be some imbalance in terms of where all these things happen. But I'm not pitching it in any particular direction.

(52:15) Mark Zuckerberg:

No. I think clearly, if you look at the trend over the last, I don't know what it is, 100 years -- there was this flip, if you pay attention to the history of science, where it changed to a hypothesis-driven scientific method of like, we're going to run tests and have controlled experiments. And since that happened, the average life expectancy has basically increased by, I think it's about a quarter of a year every year over the last hundred years. Now a lot of that, like Priscilla said, is basically making it so that a lot of people don't die young. So far it's had somewhat less of an impact on extending the maximum human life expectancy. Although the oldest people today, I do think in general, are older than the oldest people 20 or 30 or 40 years ago. But there's been a little bit less of an increase there and more just making it so that people don't suffer and die prematurely. But there's other things that you want to focus on here too. It's not just how long you live. It's the quality of the life while you're living. So I think you can live a full life and have that be high quality. Or you can get sick in different ways that kind of add up over time. And I think there's lots of different ways to improve. There's just a lot of room to improve here.

(53:41) Swyx:

And then the other element I wanted to come back to on the engineering side, which is when you're presented with a high-dimensionality problem, you want to reduce things into little boxes that you can sort of manipulate at a higher abstraction. And that's something I tried to do with the folks outside, and we really struggled because --

(53:57) Priscilla Chan:

Oh, yes.

(53:58) Swyx:

Over here, you're imaging on the atomic level, and then you're also worrying about proteins, and then you're also trying to build a cell model. Is every abstraction leaky? Where are the boxes I can move around and not worry about it? My physics analogy is in the regular world, you don't have to worry about quantum physics. But here we kind of do.

(54:16) Mark Zuckerberg:

I think you want to build it up a little bit hierarchically. And when you're trying to understand proteins, understanding molecules makes a big difference. But at some level, you can kind of just look at correlations in cells. But if you want to really have the most accurate model and if you want to be able to reason about things, then you probably also want to understand proteins well. And then I think that kind of extends. But yeah, that's part of the interesting challenge of this -- it's not just one resolution that you're looking at. I think in order to do it well, you have some amount of abstraction. But I think you want the models, just like language models or how our brains work, to basically build up different levels of abstraction and pattern matching. And I think that's here too, and you basically just need to have some basic excellence and understanding at each of these different levels.

(55:05) Swyx:

It's weird. The number of levels in which you have to telescope up and down, it's mind-boggling. I think when people say dimensions, they typically mean orthogonal dimensions, but here it's sort of nested. And then yeah, just different scales --

(55:20) Mark Zuckerberg:

That are oddly different disciplines to understand each specific scale. And it's like in a way that the people who are good at understanding one scale are like --

(55:30) Priscilla Chan:

Have never spoken to people at the next scale.

(55:32) Swyx:

Yeah. Physics is there, chemistry is here. Bio is there. It's nice to hear about it, but when you see it and you meet the people, you're like, oh, this is real. And they are actually working together.

(55:44) Alessio:

Yeah. And then there's this goal of the virtual immune system that you're working towards. I would love for you to chat about that. And also, if that happens, what should other people build? So there's obviously CRISPR and some of those technologies that people should maybe ramp throughput for. How do you think about the future?

(56:02) Priscilla Chan:

The virtual immune system I think is -- obviously I think of it as a subset of the generalized model we'll eventually get to. But the virtual immune system is super interesting for a couple of reasons. One, it's individual cells interacting with each other. There's a number of cells that we don't even fully understand what they do. B cells, T cells, NK cells. And so we can use our current technologies to understand these cells at a more granular level. So that's cool from a biology standpoint. But the clinical impact of understanding the immune system is huge. Because biology, it turns out, has already given us a way to keep the body healthy. And it also sometimes goes awry and causes disease with autoimmune disease. And so it's a very complex system that has to stay in balance, and if it goes out of balance in either direction, you get sick. It can also go into your body -- it's a privileged system that is mobile and can go into places like your brain, your pancreas, your heart to either do maintenance or to collect signal. That's built in. So if we can understand this system, we can use it to keep people healthy. We already kind of do. So there's CAR T-cells where we reprogram T-cells to go in and fight cancer. And at our New York Biohub, we're doing cellular engineering to say, hey, can you go into this person's heart? Check if they have plaques that are causing problems, read it into your DNA, self-lyse, and then we can read out the signal as cell-free DNA and give us a binary answer, yes or no. Then we can put in other engineered cells and imagine where you go in and you clear out the plaques using engineered immune cells that are your own. That is incredible. That is a tool that is realistic too. I know it sounds sci-fi. It is realistic. It is happening. And then on the other end of understanding the balance, so many autoimmune diseases. MS, lupus, those are examples of ones we know.
I think there are other things that are autoimmune that we don't understand, like dementia -- autoimmunity can play a large role in that. And so if we can understand the fine balance that the system needs to be kept in, then we can actually impact a lot of the ways the human body is maintained. So I think it's both interesting from a biology perspective and feasible to model, and probably one of the highest-impact systems if we can learn how to manipulate.

(58:39) Mark Zuckerberg:

Amazing. But it's only one system, right? I think it's like the --

(58:42) Priscilla Chan:

So it's a subset.

(58:43) Mark Zuckerberg:

If you're focused on curing and preventing diseases, the immune system is a pretty important one. And I think it's also interesting and unique in a lot of the ways that you said. But there's lots of other parts of the body to understand too.

(58:55) Alessio:

And I think we're running out of time. So we have two questions to close. One, again, 100 years maybe is too long, right? What would it take to do it in 50, in 25? And to make those happen, what should other people build to support your work?

(59:09) Mark Zuckerberg:

I think a lot of this is going to end up coming down to how far a lot of these AI methods get, right? There's just this constant ongoing debate around what are the timeframes for getting to very strong AI. And I think if you get that, then I'm pretty optimistic that with the right investments in frontier biology, you should be able to get these systems that can allow you to have virtual cells, that allow you to do the kind of precision treatments and preventative care that can achieve this kind of mission significantly sooner. But at the end of the day, I think a lot of that timeframe will probably come down to the AI timeframe. There's obviously a ton of stuff to do in biology. But it's not -- what should other people do? Other people doing more frontier biology and helping to collect this type of data and solve these problems is super helpful to that too. It doesn't automatically happen. But I guess if we're predicting whether it's going to take 10 or 20 or 40 years, that is probably more a function of the pace of AI development than it is of the pace of the pure biology side.

(1:00:19) Priscilla Chan:

Yeah. I was going to agree with you. I think a lot needs to -- I think we're on a path to get a lot of important biological data through advances in laboratory technique, but it's not a given. And there are different groups that are expert at this all across the nation and across the world. And so we need to be continuing to push the research and the methodologies. And I want to say that the Cell Atlas was not glamorous work. People were not going to get their tenure track paper by analyzing the 120 millionth cell. That is just not it, right? And so rethinking the way that this work gets done -- doing big things together in science, that's what is going to need to happen to get the knowledge we need to build models that give us this type of insight.

(1:01:14) Mark Zuckerberg:

I guess one thought on the type of biology that I think should get done is there is a certain orientation around choosing problems that will help generate data that can help make the models a lot smarter. I think you do that when you are very optimistic about the pace of progress and what AI is going to enable. Because the classic reason that scientists generated datasets is so that they could basically look through the datasets to make advances. So it is a little bit of an inversion in the thinking, which is like, I'm now going to do this so I can help train this other thing to be better and create more advances. And I think in a world where you really believe that there's going to be very significant AI progress, I think more frontier biology should be done in that way. But these datasets aren't going to get created by themselves. There's a lot of work that needs to get done and a lot of investment there. And at some level, you could probably have the smartest AI model in the world. But if it doesn't actually have the data to understand this stuff, it's like, okay, you can't just reason from first principles about all these things. A lot of human knowledge comes empirically, not from first principles reasoning. This is kind of the whole Biohub network idea that we're building. And I've been really happy to see that other folks, especially a lot of people in technology, have this orientation too. They believe a lot in AI. They believe in the technological progress. They've generated some significant wealth from building their companies, and now they're investing in science research. I think that's great. And I think doing it in this way, where you're building up these networks to build specific tools that generate data that make the models better, is one approach. It's not that all science should go in that direction. But it's one of the things that I'm quite optimistic about that I think is going to make a very big difference.

(1:03:10) Swyx:

Cool. It's probably all the time we have, but I'll just leave it to you guys for any calls to action, anything that you want biologists or engineers to check out.

(1:03:19) Mark Zuckerberg:

Check out the models.

(1:03:21) Swyx:

The tooling.

(1:03:22) Mark Zuckerberg:

Yeah. They're early, but I think it's an interesting sense of where things are going and we'd love feedback on it. It'll kind of just help this feedback loop of what we should build next.

(1:03:37) Priscilla Chan:

Yeah. I would say let's do this together. We need lots of people coming together to do this work.

(1:03:43) Swyx:

Thank you for organizing it and solving and curing all diseases.

(1:03:47) Mark Zuckerberg:

Trying to help others do it.

(1:03:48) Swyx:

Yes.

(1:03:50) Mark Zuckerberg:

Alright. Thank you.

(1:03:50) Priscilla Chan:

Thank you.

(1:04:02) Nathan Labenz:

If you're finding value in the show, we'd appreciate it if you take a moment to share it with friends, post online, write a review on Apple Podcasts or Spotify, or just leave us a comment on YouTube. Of course, we always welcome your feedback, guest and topic suggestions, and sponsorship inquiries either via our website, cognitiverevolution.ai, or by DMing me on your favorite social network. The Cognitive Revolution is part of the Turpentine Network, a network of podcasts which is now part of a16z, where experts talk technology, business, economics, geopolitics, culture, and more. We're produced by AI Podcasting. If you're looking for podcast production help for everything from the moment you stop recording to the moment your audience starts listening, check them out and see my endorsement at aipodcast.ing. And thank you to everyone who listens for being part of The Cognitive Revolution.