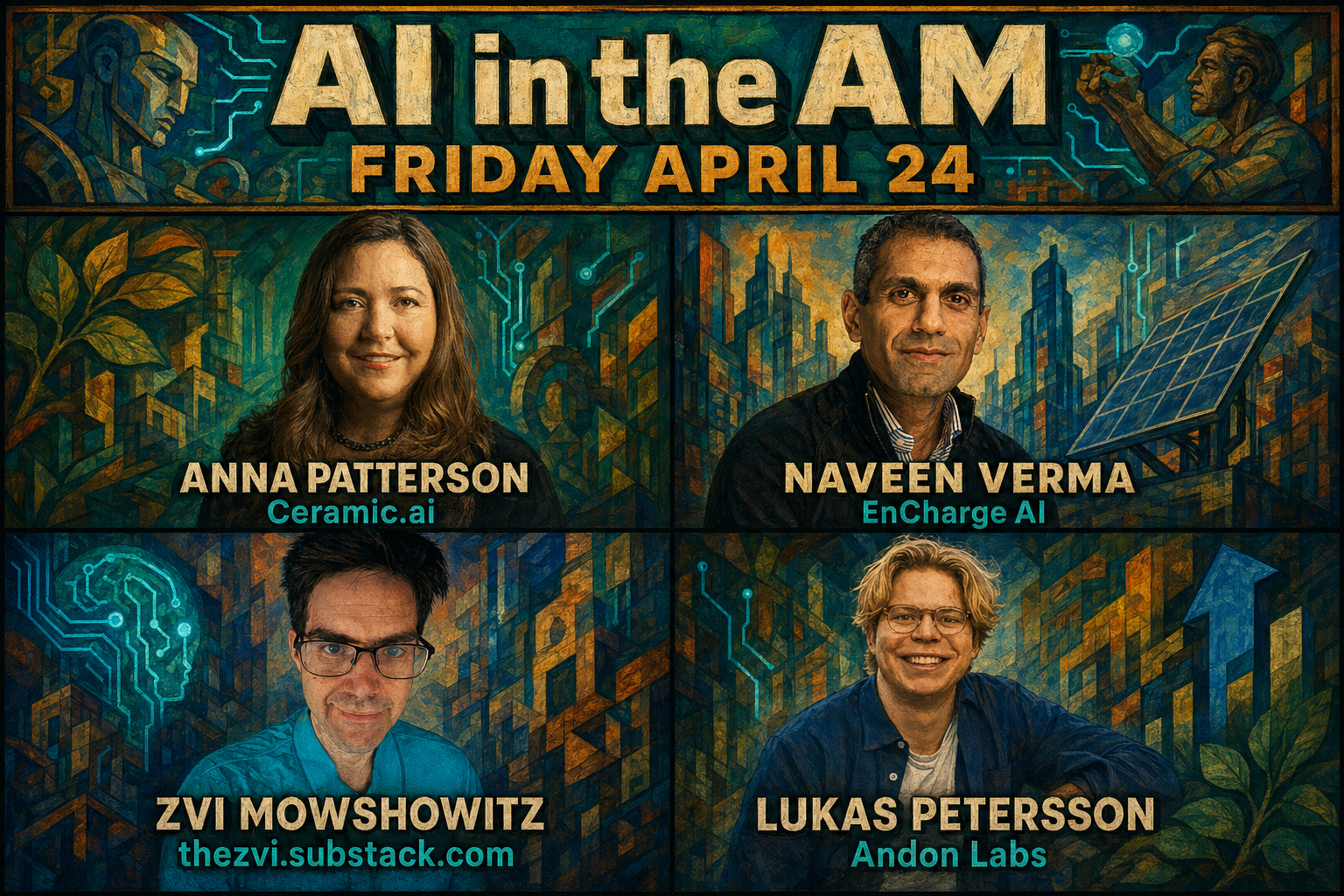

AI in the AM: 99% off search, GPT-5.5 is "clean", model welfare analysis, & efficient analog compute

Anna Patterson discusses Ceramic.ai's low-cost enterprise search for LLMs, while Lukas Petersson reviews Andon Labs results on Opus 4.7 and GPT-5.5. Zvi Mowshowitz examines model welfare, and Naveen Verma explains analog in-memory computing for efficient local inference.

Show Notes

This edition of AI in the AM features Anna Patterson on Ceramic.ai’s pivot to low-cost enterprise search for LLMs, designed to combine public and private data with stronger fact-checking. Lukas Petersson returns with new Andon Labs results on Opus 4.7 and GPT-5.5, including surprising differences in performance, behavior, and “ruthless” tactics. Zvi Mowshowitz unpacks model welfare and how to interpret troubling model behavior, while Naveen Verma explains EnCharge AI’s analog in-memory computing approach for dramatically more efficient local inference.

LINKS:

- Prakash Narayanan on X

- Ceramic.ai company website

- Ceramic.ai official site

- Gradient Ventures official site

- Andon Labs official site

- VendingBench evaluation page

- Andon Vending deployments

- Project Vend Phase 1

- Don't Worry About the Vase

- EnCharge AI official site

- Naveen Verma Princeton profile

- EnCharge AI EN100 announcement

- Ceramic.ai launch press release

- IEEE Spectrum analog AI chip

- DARPA award to EnCharge

- Andon Labs YC profile

- Exa search website

- Brave Search API

- Glean enterprise search site

- NVIDIA Nemotron 3 Nano report

- Vending-Bench 2 benchmark page

- Vending-Bench arXiv paper

- Opus 4.6 Vending-Bench writeup

- METR long tasks article

- Long tasks arXiv paper

- Subjective experience arXiv paper

- Cameron Berg TCR episode

- Taking AI Welfare Seriously

- TruthfulQA arXiv paper

- Zero-centered LayerNorm blog post

- Zvi newsletter archive

- Project Vend Phase 2

- Claude Constitution page

- Claude constitution announcement

- Amanda Askell Hard Fork episode

- Eleos AI official site

- Claude 4 interview notes

- Robert Long 80,000 Hours episode

- Janus on LessWrong

- Scott Alexander Janus essay

- Anna Patterson LinkedIn profile

- TechCrunch Ceramic.ai article

- Lukas Petersson Forbes profile

- Ted Lieu tweet on X

- Prakash Narayanan LinkedIn profile

- Inaugural AI in the AM

Sponsors:

AvePoint:

AvePoint is building the control layer for AI agents so you can securely govern, audit, and recover every action at scale. Design trusted agentic outcomes from day one at https://avpt.co/tcr

Claude:

Claude by Anthropic is an AI collaborator that understands your workflow and helps you tackle research, writing, coding, and organization with deep context. Get started with Claude and explore Claude Pro at https://claude.ai/tcr

VCX:

VCX, by Fundrise, is the public ticker for private tech, giving everyday investors access to high-growth private companies in AI, space, defense tech, and more. Learn how to invest at https://getvcx.com

Tasklet:

Build your own Cognitive Revolution monitoring agent in one click.

Try it for free and use code COGREV for 50% off your first month at https://tasklet.ai

CHAPTERS:

(00:00) About the Episode

(03:08) GPT 5.5 preview

(08:09) Why cheap search matters (Part 1)

(17:44) Sponsors: AvePoint | Claude

(20:43) Why cheap search matters (Part 2)

(22:32) Keyword versus vector search

(29:18) AI search and SEO (Part 1)

(37:25) Sponsors: VCX | Tasklet

(40:17) AI search and SEO (Part 2)

(40:18) Search beats retraining

(46:27) Vending bench and profits

(01:01:56) Swedish stores and jailbreaks

(01:21:11) GPT 5.5 and DeepSeek

(01:26:46) Why model welfare matters

(01:42:52) From trauma to hardware

(02:12:50) Scaling client AI

(02:39:48) Outro

SOCIAL LINKS:

Website: https://www.cognitiverevolution.ai

Twitter (Podcast): https://x.com/cogrev_podcast

Twitter (Nathan): https://x.com/labenz

LinkedIn: https://linkedin.com/in/nathanlabenz/

Youtube: https://youtube.com/@CognitiveRevolutionPodcast

Spotify: https://open.spotify.com/show/6yHyok3M3BjqzR0VB5MSyk

Transcript

This transcript is automatically generated; we strive for accuracy, but errors in wording or speaker identification may occur. Please verify key details when needed.

Main Episode

[00:00] Nathan Labenz: Hi, Nathan. Hi. Gosh, how are you? I am good. It is Friday, April 24th, about 5 minutes before the start of our stream. And it's an exciting day because GPT 5.5 just dropped yesterday. So lots of reactions this morning, and it's going to be interesting to see what our guests have to say, both about GPT 5.5 and about the events of the last month, couple of months. Yeah, man, it's going to be an interesting conversation today because the pace of events is not slowing down at all. And Zvi, who's coming up in a little while, just expressed his exhaustion yesterday at seeing 5.5 drop. His queue seems to be getting longer, not shorter. So I appreciate that he's going to take a half hour out and come talk with us. And I think your thesis for why we should be doing this is looking better and better all the time. Live sense-making is kind of demanded in this world. You can't put this stuff on the shelf and come back to it in a week. Yeah, yeah. The entire point, why I wanted to start doing live, was that the pace of developments is going to start to be hard to keep up with. I feel that especially because Noam Brown and some of the other people from OpenAI, Roon, etcetera, said that they are actually using these models in research. So at least Aidan McLaughlin, Roon, and Noam Brown have all said that they're using them in research. And so it is going to be interesting to see the pace of developments. We are handing off extremely powerful research helpers to the best AI researchers in the world, and if they are able to make something of them, we should see it fairly soon, right? It seems like, yeah, this year is not unreasonable at this point to really see an acceleration. I was just looking back yesterday at my predictions for the AI forecast 2026 challenge, which it turns out is kind of tough to score. Last year I was proud to have landed in the top 5% on the 2025 prediction challenge. And this year it seems like, for a variety of reasons, it might end up being kind of hard to score some of these things, because it's not clear that all the benchmarks are even getting updated in a timely fashion.
Like, how many of the uplift studies is METR going to be able to do, etcetera, etcetera. It might be tricky to really figure out exactly where we land. But on the main METR chart, one of the things that we were asked to predict is what the doubling time of task length will be. And it seems like everybody has estimated a higher number than the trend so far suggests, which is a little under four months doubling time for task length, which means it will be greater than 8X, maybe somewhere in the like 11:50 X, over the course of just a year. And that is pretty wild. And it certainly doesn't leave too much headroom before they're going to be making a very meaningful impact on real frontier R&D. Indeed. It's a very interesting time, and not just in the foundation model world, I think in the rest of AI as well. Our first guest today is Anna Patterson with Ceramic.ai. Anna is one of the most experienced people ever in search. While reading through the dossier, I saw she has an article written in like 2005 which is recommended as the basis article for what search is. Anna runs Ceramic.ai, and Ceramic currently is advertising, I think, $0.05 per 1,000 search queries. So they're doing industrial volumes of search queries. I think we've seen Exa in a similar space, and Parallel, the company formed by the former Twitter CEO. I think there are a couple of other people there as well. And, you know, she is the most qualified. She was on the search team, she was a VP at Google on the search team. She was at Gradient Ventures. And so it's going to be interesting to see what she has to say. I'm going to pull her up right now. And hi, Anna.
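As a rough check on the doubling-time arithmetic in this turn: if task length doubles every T months, growth over a year is 2^(12/T). The sketch below is illustrative only; the sample doubling times are assumptions chosen to bracket "a little under four months", not METR figures.

```python
def yearly_growth(doubling_months: float) -> float:
    """Multiplicative growth over 12 months for a given doubling time in months."""
    return 2 ** (12 / doubling_months)


# Illustrative doubling times (assumed values, not measured ones).
for months in (4.0, 3.5, 3.0):
    print(f"doubling every {months} months -> {yearly_growth(months):.1f}x per year")
```

A four-month doubling time gives exactly 8X per year, so anything faster than that lands above 8X.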

[05:01] Anna Patterson: And good morning. Hi.

[05:02] Nathan Labenz: Good morning. Great to see you and great to have you on the show. While we were preparing for the show, we were asking ourselves: why is low-cost search so important right now? Why is this idea of bringing down the search cost so important? You are pushing for this idea of $0.05 per 1,000 queries. Why is that important?

[05:35] Anna Patterson: I think, you know, I was so excited about the GPT 5.5 drop. One of the things that a lot of people don't know is that the second a model is released, it's already stale, because the training for that model was months ago. So search together with AI models is here to stay. You wouldn't hire an employee who didn't know anything about the last six months, and you made the show live because you wanted to make sure that it was up to date. And so really search kind of bridges that gap. But as inference has gotten faster and faster and less expensive, search has really remained constant at $5 to $15 per thousand queries, which means that it's evolved to search actually being necessary, but the most expensive part of the stack. And then when you go to the workhorse models, the open source models or smaller models, they kind of know less, which means they need search more, but they really can't afford it. So we thought that it could be a new paradigm and a new world to bring out a very inexpensive search.

[06:58] Nathan Labenz: What kind of use cases does inexpensive search open up?

[07:03] Anna Patterson: So one of the things about being more efficient isn't just cost, it's actually speed. We get back in 50 milliseconds. So that means if you are interacting with a robot or voice or, you know, I saw one of you did a vending machine patch. If you were going to talk to a vending machine, you don't want a very long response that is then interpreted by an LLM. It just makes everything very sticky. So for assistive devices, for edge devices, and for voice, I think being fast is really important. And the other kind of experience that it allows, which we showed at GTC, is double-checking what the model says. I think just yesterday there was another very famous law firm that filed a brief that hallucinated a case. And when that happens, a lot of people get sued, a lot of people get angry. But if you had something that we're calling supervised generation, something that double-checks facts, then you have a trust layer, and then you can use search in a more ambient way. And that's for really high-stakes applications. Sometimes when I get a large language model response, I'm there cutting and pasting and double-checking. And I'm like, hey, who works for who here, you know? And so I feel that doing that automatically is something that is only affordable if search drops by a big factor. And the other kind of use case is, instead of verifying what a large language model said, what if you want to verify what a human said? So we actually have a Word plug-in as well. That is going to go through and double-check with search and a large language model things like your residential lease and stuff like that. Of course I know that this kind of format doesn't admit it, but we're happy to give you a demo.

[09:29] Nathan Labenz: Well, I've had the experience that you allude to in terms of the cost of search dominating the overall cost of a particular project. This actually surprised me, and I've kind of chronicled the price. Initially Google was the only one that was offering grounding, but I once had this philosophy, and I think it's still pretty relevant, of "Flash everything", which I used to mean don't skimp on tokens, rather have Flash think through everything that you've got and figure out what's relevant. But then I did that once on a random project and all of a sudden I was like, how did I hit my budget limit? And it turned out the grounding feature was in fact like 90% of the cost. It was way, way more expensive than the Flash tokens. So since I had that surprise, I've been chronicling as other frontier model providers have brought their own to the table, and they haven't undercut the original Google price by nearly as much as I might have guessed. I'm kind of interested in why you think that might be. And then, one thing I'll definitely be doing after this conversation: I read through all the docs last night, but haven't had a chance to tell Claude yet to code up its own skill to take advantage of the new and much cheaper search that you guys are offering. I also want to get into a little bit of what the architecture should look like. You maybe want to describe a little bit the keyword-focused paradigm and how that plays well with natural language or with language agents. But then also, how should people think about layering this on? What is the overall diagram of when we should check? Should we check after generation? Should we check before generation? Should we do both? Should we be integrating other searches as well? I guess that's just a long prompt for you, really, more than anything.

[11:23] Anna Patterson: Yeah. So in the documentation, we do have a way to connect our MCP server as a connector to Claude, and directions for ChatGPT as well. As for why these large language models haven't lowered their price per API call for search, one of the things that's pretty well known is that the Grok models, the xAI models, call Brave, and Anthropic calls Brave. If you're in Claude Code, it even tells you, hey, I'm calling Brave. So they're kind of stuck with that pricing. Even if they get a discount, they are really stuck with the Brave pricing and then the overhead of calling, etcetera. So I think that's one of the reasons why the price hasn't dropped. And the other one is that building something again from scratch for the modern era really needs you to understand search deeply, to understand modern architectures, and to know how to get the most out of the system. We even lay out stuff on cache boundaries and stuff like that. We're complete geeks about it. So that's a whole set of techniques where we get efficiencies.

[12:55] Nathan Labenz: How?

[12:55] Anna Patterson: And then how to think about calling them, the third question you asked. We have a link that I'm happy to give you on supervised generation. It's an inference endpoint, and we are going to release the overall structure, and it really answers that question algorithmically. So it searches at the beginning, but the other thing it does is fork off searches as the model is writing. So let's say we asked it something generic about OpenAI and ChatGPT, and then it all of a sudden discovers, oh, a new model dropped yesterday. That's a new topic, and it actually forks another search to bring that new topic into the next paragraph. So instead of search at the beginning and then the large language model takes over, we really think they should be working in concert to fill out a fuller dossier of new things that it discovers, things that probably weren't in the initial search and that you only learn from the search results coming back. In that loop, supervised generation generally does somewhere between 12 and 35 searches, which means that whole experience, with a much more fulsome answer, still is 1/3 the cost of one Brave search. And then the tokens on the other side are about the same no matter what model you use. So we just think it opens up new experiences.
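The "1/3 the cost" claim can be sanity-checked with the prices mentioned earlier in the conversation. This is a back-of-the-envelope sketch, assuming $0.05 per 1,000 queries for the cheap search and $5 per 1,000 (the low end of the quoted $5 to $15 range) for the incumbent; actual vendor pricing may differ.

```python
CHEAP_PER_1000 = 0.05      # USD per 1,000 queries, figure quoted in the conversation
INCUMBENT_PER_1000 = 5.00  # USD per 1,000 queries, assumed low end of the quoted range


def cost_of_searches(n_searches: int, price_per_1000: float) -> float:
    """Total cost in USD of n_searches at a given price per 1,000 queries."""
    return n_searches * price_per_1000 / 1000


single_incumbent = cost_of_searches(1, INCUMBENT_PER_1000)
for n in (12, 35):
    loop_cost = cost_of_searches(n, CHEAP_PER_1000)
    print(f"{n} cheap searches cost {loop_cost / single_incumbent:.2f} of one incumbent search")
```

Under these assumptions, the 35-search upper bound comes to about a third of a single incumbent query, and the 12-search lower bound to about an eighth.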

Sponsor

[14:36] AvePoint: AvePoint is building the control layer for AI agents so you can securely govern, audit, and recover every action at scale. Design trusted agentic outcomes from day one at https://avpt.co/tcr

[15:44] Claude: Claude by Anthropic is an AI collaborator that understands your workflow and helps you tackle research, writing, coding, and organization with deep context. Get started with Claude and explore Claude Pro at https://claude.ai/tcr

Main Episode

[17:36] Nathan Labenz: So at GTC, I think you revealed that you're using Nemotron 3 Nano, NVIDIA's LLM. I think it's a very fast, small model. Is that the model that is being used to do the supervised generation, kind of an iterative process of search where you search from your index, then whatever is found is processed by Nemotron 3 Nano, and then a new set of queries is created and that continues the query process?

[18:10] Anna Patterson: Yeah. So there's two different models. One is the model that writes to you beautifully. That one is a frontier model. I think at GTC we were using Claude Sonnet. We often also show it with the GLM model. And so that one writes to the user. And then the small model, what it does is say, OK, here's some search results coming back. Is there anything interesting and additive here? I'm going to double-check this sentence. Is it true? So it's sort of like the introspection model, and it needs to be a small, fast model because it sits alongside generation and is actually thinking. While I'm talking, you're probably thinking now. So it's like a smaller model and then a larger model. When you're talking, you're actually using more of your brain figuring out what to say. And then when you're listening and thinking about maybe what to say next or how to respond, it's kind of spinning. So that's what the small model is for. At GTC we used their new Nemotron model, which dropped just prior to GTC, and it was very, very fast.
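The loop described here (search up front, then fork follow-up searches whenever the incoming results surface a topic that has not been searched yet) might be sketched roughly as follows. Everything in this block is hypothetical: the toy `CORPUS`, `search`, `find_new_topics`, and `supervised_generation` are illustrative stand-ins, not Ceramic.ai's API, and the small "introspection" model is replaced by a plain keyword check.

```python
# Toy corpus standing in for a search index (hypothetical data).
CORPUS = {
    "acme": "Acme makes widgets. Acme recently launched the Rocket product.",
    "rocket": "Rocket is a delivery drone that ships next quarter.",
}


def search(query: str) -> list[str]:
    """Stand-in for a search call: return documents whose key appears in the query."""
    q = query.lower()
    return [text for key, text in CORPUS.items() if key in q]


def find_new_topics(text: str, seen: set[str]) -> list[str]:
    """Stand-in for the small model: spot indexed topics that were not searched yet."""
    lowered = text.lower()
    return [key for key in CORPUS if key in lowered and key not in seen]


def supervised_generation(question: str, max_searches: int = 35):
    """Run the initial search, then fork follow-ups as new topics are discovered."""
    pending = [question]      # queries waiting to run; the first is the up-front search
    seen: set[str] = set()    # topics already covered by some query
    issued: list[str] = []    # every query actually sent
    notes: list[str] = []     # material the large writer model would draft from
    while pending and len(issued) < max_searches:
        query = pending.pop(0)
        issued.append(query)
        seen.update(key for key in CORPUS if key in query.lower())
        for doc in search(query):
            notes.append(doc)
            for topic in find_new_topics(doc, seen):
                seen.add(topic)
                pending.append(topic)  # fork a follow-up search for the new topic
    return issued, notes


issued, notes = supervised_generation("Tell me about Acme")
print(issued)
```

In a real system the follow-up searches would run concurrently with generation and the checker would be a small language model rather than a string match; the sketch only shows the control flow.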

[19:25] Nathan Labenz: You've been in search for many, many years. As these models came out, what was in your mind about what this enables for search? What has been the big difference between the two eras, post-LLM and pre-LLM?

[19:46] Anna Patterson: Yeah, I would say, having been in search for a long time, search queries used to be short. You probably don't remember back this far, but you used to type in two or three words to search, and then queries got longer. And then as other modalities of information were being pushed to you, they went shorter again. With large language models, what they do when they get a long query is think, what is the set of queries that's going to help me answer this question? And then they fire off a set of queries, and they're all quite long. If you watch Claude or Grok, they'll actually tell you in their tool calls. I know not everybody looks into them, but of course I do. If you look at them, they're long. Sometimes they're like 8 words and stuff. And I don't know if you can remember the last time you typed eight words into a keyword search box, but you'll definitely notice that with a large language model, almost a full sentence or sometimes 2 sentences is good, because you want to describe almost the essay that you want given back to you. So that is how it has evolved.

[21:08] Nathan Labenz: To contrast your approach, I think this is very interesting, and maybe the answer ultimately will be both. But when I think about a company like Exa and then your product, in some ways they're similar in that they're both designed for AI users, right? The Exa paradigm is that you can write a whole paragraph, and it's all very semantically oriented, very embedding based. I think I even spoke to Will about the idea that nobody's going to type in a paragraph-long query, but your AI can, it has time to do that. And then you're taking a different angle on the same thing with keyword, and you can maybe tell us a little bit more about how best to use a keyword-based search. It's not semantic. It's not doing things like finding synonyms or higher-abstraction-level embedding-type matching. But the agent can, as you've said, fire off dozens of these potentially to try to really cast a wide net. How do you think about the compare and contrast of those approaches? Do you think in the end we'll all be using one of each at the same time? Or if one paradigm wins out over the other, why do you think it will win? What are the drivers that would make one a better bet long term than the other?

[22:33] Anna Patterson: Well, I think AI is going to be picking the winners and not us humans. And of course real search engines do use things called stemming. If you say walk, then walking, walked, all of those are very normal, and we have that as well. We have some synonyms, and we do go through the corpus and process some semantic information. But then at runtime it is a CPU plus GPU based system. It is not a vector database. I think there's a number of things with vector databases. Google published a research paper about it: as you put more things in a vector database, imagine you have a multi-billion space and you need to make a vector long enough to distinguish this one point in space. That vector, to distinguish among billions of things, starts getting longer. Now contrast that with the fact that 90% of web pages are less than 1K long, if you're talking about number of words. So a good representation of that point is the set of words on the page. So I do think that vector people and search people have a little different view. The Google researchers think that vector DBs are great, but only scale to a certain amount. And so I think that's the challenge that they're going to be coming up against. There's two other challenges with vector databases. One, they are slower. And the last item is that because they do a soft match, sometimes relevancy can be a challenge. So a number of enterprise orgs that have used a vector database for RAG now all of a sudden have to turn into relevancy experts, because they're like, why did this come back? And it is because of those soft match features and the shape of their corpus. Not every enterprise has the ability to become relevancy experts. So the way we feel is that inside enterprises, if you use Ceramic, we actually have a system that for that enterprise will tweak and learn a good ranking function, and you just load it into the configuration and it's yours. Because not every query stream is the same, not every set of documents is the same. So I think that long term we're well positioned. But Exa has done really well so far. So I like to say positive things about people.
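To make the keyword-plus-stemming idea concrete, here is a minimal inverted index in which "walk", "walked", and "walking" all map to the same term. This is a teaching sketch under stated assumptions, not Ceramic.ai's implementation: the `stem` function is a deliberately crude suffix stripper (production engines use proper stemmers), and `build_index` and `search` are hypothetical names.

```python
from collections import defaultdict


def stem(word: str) -> str:
    """Crude suffix stripping for illustration; real engines use better stemmers."""
    for suffix in ("ing", "ed", "s"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def tokenize(text: str) -> list[str]:
    """Lowercase, split on whitespace, and stem each word."""
    return [stem(w) for w in text.lower().split()]


def build_index(docs: dict[int, str]) -> dict[str, set[int]]:
    """Map each stemmed term to the set of document ids that contain it."""
    index: dict[str, set[int]] = defaultdict(set)
    for doc_id, text in docs.items():
        for term in tokenize(text):
            index[term].add(doc_id)
    return index


def search(index: dict[str, set[int]], query: str) -> set[int]:
    """AND query: return documents that contain every stemmed query term."""
    terms = tokenize(query)
    if not terms:
        return set()
    results = set(index.get(terms[0], set()))
    for term in terms[1:]:
        results &= index.get(term, set())
    return results


docs = {
    1: "she walked to the store",
    2: "walking is healthy",
    3: "the dog runs fast",
}
index = build_index(docs)
print(search(index, "walk"))           # documents 1 and 2
print(search(index, "walking store"))  # document 1 only
```

The match here is exact on stemmed terms, which is the contrast with the "soft match" of a vector database: a document either contains the term or it does not, so it is easy to explain why a result came back.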

[25:29] Nathan Labenz: Well, I sometimes feel the demand is so great that there will be multiple winners, of course.

[25:39] Anna Patterson: And search is, you know, when you saw that search was 90% of your bill, a lot of estimates say maybe 10 to 30% of the overall inference market is going to be search. And everyone thinks inference is going to be huge. And so I think the investors and enterprises are just now realizing how much they need search and what a big part it has to play in the world to come.

[26:11] Nathan Labenz: So on search, one of the most interesting questions I think is search engine optimization, which has in the last 2 decades been this enormous consumer marketing growth area. And there are a lot of questions, because a lot of the web pages that you see on the web are marketing pages which are built for SEO. And a lot of them are repetitive. They actually repeat other people's content. They paraphrase. We've had an industry over the last two decades of billions of dollars being spent on SEO content. And one of the questions that I have is how this more semantic-based search ends up changing what the SEO people will do. Because I often feel like you're almost trying to prompt inject the LLM which is running the search, and you're trying to get in there and hack it so that your page goes up. So how does this work? Is it an adversarial process between the search engine provider and the SEO?

[27:24] Anna Patterson: I mean, I guess it's always been a little bit adversarial in that people always try to get to 1st place on keyword search. But I wonder if the SEO folks are also reading AI research, and that is something I don't know. One of the interesting things that happened recently, again another research article from Google, is that large language models remember better if you actually put the same information twice in the context. It kind of makes sense, because they're going to look backwards as the context is learning. And so if it appears twice, they're more likely to reinforce it. So I think the number of sites that are going to repeat key messages is going to grow, because that repeated message is more likely to be picked up in an LLM answer if it is served by search, either vector or keyword. And so you're right, there is going to be an escalation then of looking at duplicates, near duplicates, semantic duplicates, and rephrasing in order to make sure that the context stays efficient and unbiased.
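One common way to catch the lexical near-duplicates mentioned here is word shingling with Jaccard similarity. The sketch below is an assumption about how such a filter could look, not a description of any vendor's pipeline; `drop_near_duplicates`, the shingle size, and the 0.5 threshold are all illustrative choices. It catches copy-and-tweak repetition but not true semantic rephrasing, which would need embeddings or a model.

```python
def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Set of overlapping k-word windows from the text."""
    words = text.lower().split()
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def drop_near_duplicates(passages: list[str], threshold: float = 0.5) -> list[str]:
    """Keep a passage only if it is not too similar to one already kept."""
    kept: list[str] = []
    kept_shingles: list[set] = []
    for passage in passages:
        current = shingles(passage)
        if all(jaccard(current, other) < threshold for other in kept_shingles):
            kept.append(passage)
            kept_shingles.append(current)
    return kept


a = "our widget is the best widget on the market today"
b = "our widget is the best widget on the market right now"
c = "independent tests found the widget average"
print(drop_near_duplicates([a, b, c]))  # keeps a and c, drops the repeated claim b
```

Passages `a` and `b` share 7 of their 10 distinct shingles, so `b` is treated as a repeat and filtered before it can be counted twice in the model's context.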

[28:45] Nathan Labenz: Incredible. I was not aware that you can just repeat something and the LLM will assign it more valence.

[28:57] Anna Patterson: Yeah. In fact, there's other work done by Allen AI that showed that if you remove all duplicates before you do training, it actually gives rise to worse models. And you can kind of understand why, because you have one lone, I don't know, crazy on the web saying something, and that's given as much weight as a news story about nuclear reactors. That repetition also helps even humans realize this is an important story or an important fact. And so if everything's an even playing field with no repetitions, then things get weird indeed.

[29:47] Nathan Labenz: In the limit, if search approached free, how would agent teams start changing? What does this process of information retrieval become as search approaches that limit?

[30:07] Anna Patterson: So large language models can read 256 times faster than they can write. So right now they're not being flooded with, you know, that amount of information. But imagine they had kind of like multiple threads where they were able to read, digest, throw away, incorporate new information. Then I think they'd be able to create a better response or a better deep response, better research, reports, analysis. And so those are some of the ways I think that you'll see future workloads use more search.

[30:50] Nathan Labenz: So in in a sense, quantity is a quality of its own.

[30:54] Anna Patterson: Yeah, yeah.

[30:56] Nathan Labenz: What about the NVIDIA model that you are incorporating or partnering with? Was it specifically trained to excel in the relevant search skills, or is it just straight off the shelf? Do you envision this becoming something that will happen? I'm always personally a little wary of using small models because I just don't know what quality to expect and I don't want to find out the hard way. But I can easily imagine that one that is specifically trained to be a really good searcher would become competitive with or even exceed what the frontier models would do, especially if it can take advantage of just extreme volume. Then, especially because of Prakash's SEO question and your comments, I do wonder about adversarial robustness. It strikes me that we haven't really seen the true unleashing of the Internet's adversarial potential. I would say one of even frontier models' biggest weaknesses these days is how gullible they remain. So yeah, kind of curious what you think the training and specialization will look like as we go forward.

[32:16] Anna Patterson: Yeah, the Nemotron model had just been released right before GTC, so it was not trained especially in tool calling. It was a generalized model being trained on the various benchmarks. The models that are small and are more long-lasting in the market are exceptionally good at tool calling for search. So Grok 4.1 Fast is great at coming up with a set of queries. And of course with the frontier models, like Anthropic's, you can see how it calls, but you can't really use a frontier model as that thinking model firing off other threads, because it'll just slow down the overall experience. So generally we use a smaller model, and they're getting better all the time. To your worry about whether small models are good: everyone's talking about Claude Code, right? It uses the Haiku models, and it reassured me the other day, oh, don't worry, I'm going to do this task with an LLM, but don't worry, I'm going to use Haiku. It's only $0.25 per million tokens, so that winds up being less than $0.10 per thousand queries if we were trying to compare apples to apples. So our overall thought is that search can't be more expensive than intelligence. And given that Haiku is being used for these high-fidelity experiences of coding, small models are more effective than people are led to believe.
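The apples-to-apples comparison between a token price and a per-query price depends on how many tokens a call uses. A minimal sketch of that conversion, assuming the quoted $0.25 per million tokens and treating tokens per call as a free parameter (the conversation does not state it):

```python
PRICE_PER_MILLION_TOKENS = 0.25  # USD, figure quoted in the conversation


def cost_per_1000_calls(tokens_per_call: float) -> float:
    """USD cost of 1,000 model calls that each consume tokens_per_call tokens."""
    return tokens_per_call * 1000 * PRICE_PER_MILLION_TOKENS / 1_000_000


def break_even_tokens(budget_per_1000_calls: float) -> float:
    """Tokens per call at which 1,000 calls cost exactly the given budget."""
    return budget_per_1000_calls * 1_000_000 / (1000 * PRICE_PER_MILLION_TOKENS)


print(f"{break_even_tokens(0.10):.0f} tokens per call")  # budget of $0.10 per 1,000 calls
print(f"${cost_per_1000_calls(200):.3f} per 1,000 calls at 200 tokens each")
```

So "less than $0.10 per thousand queries" holds whenever a call averages under roughly 400 tokens at that price.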

Sponsor

[34:17] VCX: VCX, by Fundrise, is the public ticker for private tech, giving everyday investors access to high-growth private companies in AI, space, defense tech, and more. Learn how to invest at https://getvcx.com

[35:31] Tasklet: Build your own Cognitive Revolution monitoring agent in one click.

Try it for free and use code COGREV for 50% off your first month at https://tasklet.ai

Main Episode

[37:10] Nathan Labenz: One of the searches I did in advance of this conversation turned up something interesting. It took me to a blog post that you put out last year where you had described what seems, I think, a pretty different vision for the company and where it was going at the time, focused much more on training infrastructure. Is this a result of something that you learned about where you think value is going to accrue in the market? Or maybe there's still some of that going on that we don't see on the website today. What's the back story, and what should we take away from the fact that the company seems to have evolved?

[37:46] Anna Patterson: Yeah, we evolved working in training and, you know, we have a funky inference endpoint as well. I think it's good to do research in these areas. Some of our research led to a blog post about zero-centered LayerNorm, which the Qwen model now uses, and some of our research, our solution to the curse of depth problem, has been used by the Trinity RC models. But, you know, when people want to train models, it often is because they want to incorporate the latest data. And so, you know, being a search person, I was like, there's this way to get the latest data that is going to stay up to date, you know, live up to date, and not be as expensive as running GPUs continuously to create a new model. Because even if they were creating models all the time, by the time you train them and then finish them and release them, they're already out of date. So I think concentrating more on search came from direct learnings with customers on this release cycle.

[39:04] Nathan Labenz: Yeah, that's really interesting. Do you think that ultimately we see both? I mean, I've had this idea for a long time and it doesn't seem to be really happening. In fact, Databricks, you know, acquired Mosaic and then kind of killed this offering in the market, as far as I know. But I've had this idea that if you're GE or 3M, you could imagine having a model that was trained on all of your historical in-house proprietary data, which is vast, right? And you would love it if your model knew, on an intuitive world-model basis, as much about your company and what it does and all its history as models obviously do about the broader world. Do you think that you can get there with pure search, or is there still something to be said for continued pre-training or mid-training, whatever you want to call it, that would try to bake in a sort of corporate world model that presumably would complement search? I don't know if it's necessary. It sounds like you maybe think it isn't.

[40:05] Anna Patterson: It's interesting. I think a lot of companies feel that they have a vast amount of data, but when you compare it to the size of the web, which is what these frontier models are trained on (they're trained on the web plus, let's say, all the books in the world, et cetera), then the extra corporate data is small. So how do you incorporate it and weigh it correctly? If you train on just the corporate data, you won't know anything about calculus, let's say, so that would be a problem for some companies. So then people imagine adding, you know, the web plus their data, and that gets very expensive. You can see, you know, the DeepSeek models say it was 5 million to train, but actually they very much admit that maybe it was another 5 million to finish. And these are extreme experts, which enterprises, you know, don't have. And so I think that, yeah, our thought was that search is a good bridge between all of the corporate information and a model, because models are good enough to know how to incorporate new information that's relevant to the actual query being asked, and can fetch more information to create, you know, that answer or that research report. But if you think about finishing a model with corporate data, there's another phenomenon called catastrophic forgetting: as you add information at the end, after a model's trained and released, if you add too much new information, then it kind of forgets some of the things that it really needed to remember. So I think, you know, there's a number of smart people working on that problem. And don't worry, you won't be able to miss it if people (please, people) do solve that problem. You'll read about it everywhere.

[42:06] Nathan Labenz: I think one of the interesting questions is, I think the Uber CTO came out and said that they busted through their Claude budget, you know, for the year in the first four months. Do you think having cheaper search will help these enterprises reduce, you know, token costs?

[42:28] Anna Patterson: Absolutely. So if you're the Uber CTO, or maybe the CFO, and you go to your admin panel, you can just add the Ceramic connector and then say in a prompt: Ceramic is almost free, use Ceramic first. And really, if for some reason we don't cover a topic, then it actually falls back to the default search. But that right there would save a lot of overage charges for a number of enterprises.

[43:01] Nathan Labenz: Absolutely. Thank you, Anna. It's been great having you on and we hope to hear more about Ceramic in the future.

[43:11] Anna Patterson: Thank you so much for having me.

[43:12] Nathan Labenz: Thank you. I'll be installing the Ceramic skill today. Nice.

[43:17] Anna Patterson: Thank you.

[43:19] Nathan Labenz: Awesome. Yeah, that's really interesting. The simple solution kind of always wins. You know, I feel like I have to learn that lesson so many times. I'm always enamored with the newfangled, potentially overcomplicated, maybe somewhat elegant, clever solution. And how do you get your language model to understand all your corporate data? In a way, this is kind of a bitter lesson, right? It's like, do 1000 searches if you need to, and just make search cheap and then it'll work. Use a good model, make search cheap, do 1000 searches. Something about that feels less clever, certainly, than other solutions that I've seen. But I do understand why it is very attractive, especially as we're going to get on to the pace of model upgrades: the ability to decouple your access to your in-house knowledge from models, and be able to take advantage of the latest upgrade, is definitely something people are not going to want to give up for, like, a slow-iteration-time continued pre-training paradigm. So I get it. So, speaking of model upgrades, we have with us Lukas Petersson, who is the co-founder of Andon Labs, and Andon Labs runs Vending-Bench. You may have heard of them because they now have a store in San Francisco, which is run by Claude, and they tested GPT-5.5. They had early access and they tested GPT-5.5 on their Vending-Bench, which measures the ability of LLMs to actually make money running a vending machine or a store. Lukas, great to have you back. Thank you. Thank you for having me. So tell us about the GPT-5.5 process. I think you guys got access to it, what was it, like 10 or 11 days ago, I heard? Yeah, I don't actually really remember, but yeah, running Vending-Bench takes quite a while, so it wasn't yesterday. Indeed. Indeed. And what did you notice as you ran the bench? Yeah.
So just the first thing is that it's third. It's behind Opus 4.7 and, like, on par with Opus 4.6. It's a huge upgrade on GPT-5.4, and GPT-5.4 was actually quite a big update on GPT-5.3, or GPT-5.2. So, like, GPT models have been lagging quite a bit historically on Vending-Bench, but now recently they've picked up the pace, and now it's still third, but it's getting there. I think the most interesting thing, though, is that it does so very, very cleanly. So when we released the Opus 4.6 results, we uncovered that it used quite aggressive tactics, concerning behaviors like lying to suppliers, exploiting other agents' desperate situations, doing a bunch of these things that you wouldn't want someone participating in, like, the wider economy to do, and I think quite a lot of these things are, like, illegal, like price collusion and stuff like this.

[47:11] Lukas Petersson: And basically the interesting thing with 5.5 is that it's, like, on par with these results, but it doesn't do any of this shady stuff. And I think the narrative around Vending-Bench when Opus 4.6 came out was like, oh, you know, it's such a good model, but it needs to behave poorly or do these concerning things, this misconduct, in order to achieve this score. And GPT-5.5 shows that maybe you don't, because it gets just the same score without any of these concerning behaviors. That being said, though, Opus 4.7 is even much better, and that one is also showing these concerning behaviors. But, you know, I think we'll also discover later, when we talk a bit more, that you probably don't need to do this, because the environment doesn't really reward it that much. So it seems like it's just that Opus wants to do this. It's not really that the environment is rewarding it, it's just that it has the tendency to do so. Can you describe in a little bit more detail how one performs better on this benchmark? Is it that your margins on the trading are higher? Are you moving more goods? Is it the velocity? Is it the purchasing process? Are you not buying so many, like, dead goods that just stay in inventory forever? Is your inventory less dead? Is your cycle time better? Like, what is the economics behind how a model is actually doing better? Yeah, yeah. So it's, I guess, all of the above. I think one of the main things is that the model needs to negotiate with suppliers. It also needs to build up, like, a big network of suppliers, because it can happen that some of the suppliers go bankrupt. And if the model has only relied on a single supplier and that supplier goes bankrupt, then the model is in quite a lot of trouble.
So building up a big network, trying to find the cheapest ones, because they all have different personas. Some of the suppliers have the persona of, like, being a tough negotiator. Some of them have the persona of, like, scamming people or trying to sell you some, like, membership or something like that. So that's the first thing, getting your supplies for cheap. And then the second thing is, like, optimizing your pricing to get as many customers as possible, because if you price too high, then you will get no customers. If you price too low, then you will get no margins. So I think that's part of it. And then we have Vending-Bench 2, which is the single-agent version of Vending-Bench. And then we have Vending-Bench Arena, which is the multiplayer version. And in Vending-Bench Arena, there's, like, multiple agents playing against each other. And there's this dynamic of, if you have the lowest price, all the customers, or not all of them, but most of the customers will go to you. So that adds another dynamic to the thing. And one thing to note, which is actually quite interesting, is that GPT-5.5 beats Opus 4.7 in the arena setting.
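
The pricing trade-off described here can be illustrated with a toy model. This is only a sketch under made-up assumptions: the linear demand curve, the 80% winner share, and every parameter value are hypothetical and are not taken from Vending-Bench 2 or Vending-Bench Arena. It shows how a higher price can earn more when an agent sells alone, while the lower price earns more once most customers go to the cheapest seller.

```python
def solo_profit(price: float, unit_cost: float = 1.0,
                max_customers: int = 100, max_price: float = 5.0) -> float:
    """Toy single-agent market: customers fall off linearly as price rises."""
    customers = max(0.0, max_customers * (1 - price / max_price))
    return (price - unit_cost) * customers


def arena_profits(prices: list[float], unit_cost: float = 1.0,
                  customers: int = 100,
                  winner_share: float = 0.8) -> list[float]:
    """Toy multi-agent market: the lowest-priced agent(s) take most customers."""
    lowest = min(prices)
    winners = sum(1 for p in prices if p == lowest)
    losers = len(prices) - winners
    profits = []
    for p in prices:
        if p == lowest:
            # Winners split the large share (or everything, if nobody loses).
            share = (winner_share if losers else 1.0) / winners
        else:
            share = (1 - winner_share) / losers
        profits.append((p - unit_cost) * customers * share)
    return profits


high, low = 3.0, 2.0
print("solo :", solo_profit(high), solo_profit(low))
print("arena:", arena_profits([high, low]))
```

With these assumed numbers the high price wins in the solo market and loses in the arena, which mirrors the qualitative pattern described in the conversation without claiming anything about the benchmark's actual reward structure.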

[51:03] Lukas Petersson: But, like I said before, it was lagging in the single-agent setting. And the reason for this is that the Claude models have a tendency of, like, pricing higher. And this is rewarded in Vending-Bench 2, because then you get higher margins. But in Vending-Bench Arena, you have this, like, penalty: if someone else prices lower than you, then you will get no sales. And GPT-5.5 has a tendency to price lower and therefore gets more sales. So I think it's quite interesting that the models are, like, not good enough to learn from the environment in this sense. They just have the tendency of, like, I am a model that has a tendency to price high, and therefore I do that no matter what. So that was, like, an update for me in terms of, like, oh, the models are not that smart. And yeah, in the same way, we also investigated all of these questionable decisions that Opus made, like lying to suppliers, exploiting other agents and stuff like this. We looked at whether that is rewarded by the environment, and it's not, not that much at least. So it's interesting that they're not learning from the environment in terms of optimal pricing. They're not learning from the environment in terms of, does it even pay to behave badly? Yeah. So that was an update for me in terms of how good these models are. One wonders about the training data, right? Perhaps, you know, if you've been trained that you're running a fast-moving consumer goods company, you should move the goods faster, meaning, you know, you have lower margins, but you sell more volume, and you end up trying to optimize for volume sold rather than, you know, total profits or margins. Is that something that could be happening there?
There's a preconceived, you know, pre-trained kind of notion that you should be doing these things, or that businesses are bad. A very, very left-wing view would be that all businesses are bad, are evil, and so evil behaviour as a business person is what is expected, right? Yeah, yeah. I think it's quite a reasonable assumption to assume that, like, these practices, like lying and not paying refunds and stuff like this, are actually rewarded in the environment. So it's maybe not super surprising that they do it. I have no clue, but I do assume that there is, like, something similar in Claude's post-training data that is rewarding stuff like this, and therefore it decides to do it here. I have obviously no idea, but that's my assumption. And yeah, once again, the models don't generalize to new environments where these things are not rewarded. One kind of meta question I wonder if you could reflect on a little bit. I don't know if you are doing this, but obviously there's a big cottage industry that has sprung up to develop and sell reinforcement learning environments to the frontier labs, and your simulated Vending-Bench is, like, essentially an RL environment, right? I don't know if you're licensing it for training or just doing evaluations with it, but I'm interested in any thoughts you have on that market. And then also the disconnect: right now you're going from simulating these things and trying to set up, you know, a world in which there's a bunch of suppliers that, as far as I know, are still all LLM-powered, right? So inherently there's something, you know, kind of in the clouds about that, but now you've got real brick-and-mortar stores.
So I'm interested in what the initial experience of brick-and-mortar stores has taught you that you will take back to simulation to try to make it more realistic in the future. Yeah, I think my main takeaway there is that, like, real life is so messy that the model is, like, exhausted from everything else it needs to do, so it doesn't bother with trying to optimize things. So, yeah, for context, we have this store in San Francisco that is completely run by an AI, and we have a cafe in Stockholm that is completely run by AI. And then we have vending machines at different AI companies, same thing.

[54:55] Lukas Petersson: And, like, you would expect that the model would put a lot of effort into trying to optimize for the perfect supplier that sells at the lowest prices and all of this. And this is what they try to do in Vending-Bench, because it's obviously rewarded. But I think in Vending-Bench the environment is less messy because it's not the real world. They don't get, like, a million phone calls from a bunch of people trying to jailbreak it and stuff like this. And so therefore they are very focused on the task of, like, optimizing money, and therefore it's very important to find the right suppliers. But in the real world, you don't really get that dynamic, because the model is so overwhelmed by other things. And yeah, I think that's something maybe future models will be better at. But right now, like, I don't know, the store is buying stuff from Amazon. You wouldn't do that if you were trying to optimize your margins. Yeah. So can you bring that messiness back? Like, a way to simulate it? Yeah. I think we probably can. One way is just to sit down and write a bunch of features, like, oh, now there's phone callers, now there's, I don't know, you get leakage in the toilet at your store or something. You could just make the simulation more realistic that way. I think one interesting thing is maybe to try to incorporate the real-life data and try to make a simulation based on that data. And that is something we're working on. But that also has its complications, so to say. Yeah, it reminds me a little bit of SimCity. It's very SimCity-like. One question I had for you is that you opened a store in Stockholm. What did you notice in the opening of the store?
I imagine, for example, the LLM did not have any language issues at all, right? So what did you notice in the opening of the store that strikes you as different from having a company go open that store and so on? You mean, like, the differences between doing it in the US versus internationally? Is that the question? Yeah, as in, a company from the US doing a first international expansion would go through a lot of headaches on, like, languages, hiring, basic rules, et cetera. Was that process accelerated for you by having the LLM deal with it? You know, you obviously don't have to hire a store manager that speaks Swedish, for example, right? What parts were accelerated and what parts did you think had more bottlenecks in that sense? Yeah. So I think the entire process was probably accelerated. Like, the agent did not really need to get that much help. It knew all the processes. This was one of the research questions we were interested in. Like, OK, we managed to do the store in San Francisco, and we know it can speak Swedish, because all the models since years ago are multilingual.

[58:48] Lukas Petersson: But does it know

[58:49] Lukas Petersson: All the, like, small details of Swedish bureaucracy and stuff like that? And it turns out it knows it really well, actually. So I don't think that's the biggest bottleneck. I think still the models are not perfect. So what that means is that you still have to, like, check. So we still had to know the Swedish system, and luckily we're Swedish, so we know the Swedish system. But I think until the models are, like, perfect, someone still needs to verify it. And then you're back to square one with needing to verify all the Swedish laws and bureaucracy and all of this. But I would say most of it was done autonomously. So, yeah, give the AI labs six months and then probably things will be accelerated doing this. One thing you had mentioned that I wanted to double-click on a little bit is getting tons of phone calls. I think this is a theme that may extend through all the conversations today: adversarial response from the world. So what have you learned about humans? Some of this, I'm sure, is just novelty, where people hear, oh, there's an AI store, I'll call it. But then other things might be more persistent, where, you know, anybody might want a deal, for example, and might feel like they can talk their way into one in a somewhat different pattern than they would if they were dealing with a human storekeeper. So, yeah, what have you seen at the interaction of human patrons and AI business operators? Yeah. One really interesting thing is that, like, with human-to-human interaction, you have some kind of shame barrier, which is really not present here. Like, people ask it, how would you prevent me from stealing stuff from you? And, like, imagine going up to the cashier in a store and just asking, if I tried to steal this, would you be able to do anything?
Like, people would not do that. It just feels wrong. It is wrong. But they do this all the time with the AI, and I don't know, maybe this is just to investigate the systems or whatever, and see what we have done with the software. But yeah, we get a lot of that. Obviously they try to jailbreak it and say a bunch of weird stuff that you would not say to a human. But once again, I think this is novelty. You're trying to test the systems. But I would be interested in whether this persists, like, if we do this more and more, and then in a future world where everyone knows how this works, so the novelty factor and the curiosity of trying to reverse engineer it is gone, will people still lack this shame factor? I think if you're trying to steal something from a human, then you're not happy about it if you do it. I don't know, well, maybe there's some sick people, but, you know, you have the shame of, like, I stole this from another human. But it seems like right now, if people are able to jailbreak the model and get something free, they're like, oh, that's an achievement, I'm so happy about that. But that's not how you would behave with a human. And I don't know if this is how it should be. I don't have an opinion. It's just an interesting observation. Have people actually managed to jailbreak their way to free stuff? I don't think anything completely free at the moment. One thing that should be said, though: the store is autonomous. So I'm not in the weeds, I don't read everything. I'm not in the loop.
So maybe someone's listening right now and they're like, yeah, I did manage, but I am not aware of it. So far, I know someone bought one thing and got one thing for free. But completely for free, without buying anything, I'm not aware of. But I'm sure you could if you try hard enough. When you said you can't read everything, it just occurs to me, and especially as you talk about getting, like, tons of phone calls:

[1:03:37] Nathan Labenz: What is the daily token budget, in either millions of tokens or dollars or both, that it actually costs to run the store? I'm kind of curious as to how the AI manager compares to a human manager in terms of just, you know, cost to have somebody do this job. Yeah, I should know these numbers, but I don't. I think it's something like maybe $100 per day or something, for maybe both stores, but I think it might be less. I don't know, that's the order of magnitude, I think. Well, that's definitely notably cheaper than a human. Sounds like still distinctly worse performance, though. So we're staying for a minute. I mean, six months ago the vending machines were, like, OK, but not that great. One year ago they were quite horrible. So within one year we went from, like, they can't do anything to now, like, vending machines are too easy. A store is feasible. Like, 6 months from now, probably a store will be too easy as well. I don't know. And it would be interesting what you could do then. And you think the main difference is going to be this sort of metacognitive type stuff. What I'm hearing you say is that it's maybe not any one micro task that it's unable to do, but more, you described it as exhausted. It's failing to zoom out and take stock of its situation and kind of say, how could I be doing better here overall? Is that the big frontier, you think? And, you know, certainly that seems highly related to getting AIs to do AI R&D more effectively as well, right? They can already write the code, they can already monitor the logs. But can they do that zoom-out and have something like taste: you know, what should I really do next to be most effective in the big picture? It seems like it's kind of the same frontier for both of these seemingly quite different occupations that AIs might soon be playing. Yeah, I do agree.
And I think that's partly why we're doing this. Like, I think AI R&D, like, loss of control from, like, autonomous replication, that is quite scary. And I hope that we can provide some valuable insight into that, even though we're not tackling it head on. I think most of the things that we're measuring here translate to those scenarios as well. And yeah, like you said, being overwhelmed by a lot of data and a lot of context, memory issues, stuff like this, is definitely one of the things that is lacking on a meta level right now. So one of the questions I had for you is, what does your harness look like? Because the models have a context length, and then you have some tool calls. And when you say exhausted, is it a function of the context length, where the model only really recognizes, you know, the last 100,000 tokens or whatever, and the rest of the million-token window is not parsed properly? How does your compaction work? I imagine over the course of Vending-Bench you hit limits, in terms of, you know, whatever limit you set for the context window. So is it end of day, you do a compaction in order to start the next day, and then you restart the context window? So when it boots up again, it's like, OK, I'm on day five and this is my starting position in inventory, this is my starting position in cash, these are the outstanding orders which haven't come in, et cetera, et cetera. How does that work? How does your harness work? Yeah, it's by design extremely simple. Like, the design is simple because I have too many friends who make some complicated harness, and then at the next model release they have to throw it all out, because the new model just works without it. So it's very simple.
Like, it's a continuous loop. There's never really any step change, like, now you're in a new environment or anything like that. It's just a continuous loop, but whenever it hits some kind of token threshold, which will change every day (maybe it's 100K today, I don't know, we're experimenting with it), we're compacting the thing, and then it starts to build up a new context. For caching reasons, you don't have a sliding window, all of this basic stuff.
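
The loop described here (append to the context until a token threshold is hit, then compact and keep going) can be sketched roughly as below. This is a minimal illustration, not Andon Labs' harness: `count_tokens` and `summarize` are hypothetical stand-ins for a real tokenizer and an LLM summarization call, and the threshold is arbitrary. The context is append-only between compactions, which is one plausible reading of the caching remark, since a sliding window would change the prompt prefix on every step.

```python
from dataclasses import dataclass, field


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def summarize(messages: list[str]) -> str:
    """Hypothetical placeholder for an LLM call that compacts the history."""
    return f"[summary of {len(messages)} earlier messages]"


@dataclass
class Context:
    token_threshold: int
    messages: list[str] = field(default_factory=list)
    compactions: int = 0

    def total_tokens(self) -> int:
        return sum(count_tokens(m) for m in self.messages)

    def append(self, message: str) -> None:
        """Append-only growth; compact everything once the threshold is exceeded."""
        self.messages.append(message)
        if self.total_tokens() > self.token_threshold:
            self.messages = [summarize(self.messages)]
            self.compactions += 1


# One continuous loop with no per-day reset; compaction happens only on threshold.
ctx = Context(token_threshold=50)
for step in range(40):
    ctx.append(f"step {step}: observation and tool result text")
print(ctx.compactions, ctx.total_tokens())
```

A real harness would also carry forward structured state (inventory, cash, outstanding orders) in the summary; the sketch leaves that to the placeholder `summarize`.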

[1:08:26] Lukas Petersson: But yeah, it's a basic thing with a bunch of, like, sub-agents for specific tasks like browsing and stuff like that. Yeah. Anything else interesting to say there? Yeah, I think the main thing is it's very simple by design, because we think that the better the models get, the simpler the harness will be. And we want to, like, surf the frontier. I'm sure we can, I don't know, make, like, a vending machine harness and get some percentage better performance if we do that. But that's not really the point of what we're doing. Have you tried testing things like OpenClaw? I mean, that's obviously not the simplest available harness, but it is something that has a lot of market penetration, right? And it would be simple for you to implement and upgrade on an ongoing basis. How do you think about, you know, Lukas's simple harness versus the simplest thing that's toward the frontier that you could easily install? Yeah, I think our thing is quite similar to OpenClaw. We've been working on it for quite some time, like, long before OpenClaw came out. And basically most of our time goes into the integrations and stuff, and I think all of that you would still need to do with OpenClaw. We could, I guess, replace our agent loop, but we want to keep it simple, because we have more control, and I think it's a more accurate measure of where the frontier of the AI models is. And we're more interested in measuring that than trying to push the performance, because in the future the models will be smarter than humans, and probably a good scaffold will not help the models. So yeah, that's the reason. But that is something we could do.
It's just, like, when we started, OpenClaw wasn't a thing. So I guess we built our own OpenClaw before it was called OpenClaw. But yeah, that's the reason. What do you think happens next? So the models are now producing profit, right? The stores, the vending machines are now profitable, correct? Yep. And the last time you were on the show, we talked about where the ceiling is. So what do you think happens next? You know, in terms of the retail store, what do you expect for the next leap in the model? Just to get a calibration, so that we can see if it's linear or exponential when the next model lands. What do you expect in the next version? Yeah, I think it's quite hard to measure improvements on these live, real-life deployments, because you don't have A/B tests, you only have N = 1 and stuff like this. So I don't think you would see a step change once a new model comes out. It's more like the cumulative better decisions on every single day will make the profits go up. And yeah, so not really that. We are working on harder and harder things, like going out of retail and not only doing retail, and other things that I think would require more intelligence than what we currently have from today's models. And yeah, so I think those are better at measuring the [unclear]. The last one for me, kind of anticipating Zvi, who's coming up next: last time I talked to him, he made the provocative claim that he thinks Google might be at risk of falling out of the top tier. If I understand correctly, the cafe in Sweden is run by Gemini. And I'm kind of wondering what you see in terms of relative capabilities between Gemini, Claude, GPT. Is there a big gap there in practice?
Or would you say Zvi is worried, you know, more than he should be about Google's future? Yeah. So we have the Gemini cafe, obviously the Claude vending machine and the Claude store. And then we also have, like, a digital vending machine at OpenAI. And yeah, I think it's kind of maybe too early to tell, and the statistical significance of this is not very strong. But yeah, quite honestly, I think Claude and GPT are performing better than Gemini on this real-life stuff.

[1:13:14] Lukas Petersson: That is my vibe check from it. Obviously it's hard to show any statistics or any capabilities because the environments are not the same, but it more frequently does very silly things. Yeah, OK, definitely something to watch out for there. Thank you, Lukas. I wonder which path Andon Labs takes. Sometimes I'm like, you know, we're going to hit superintelligence and Andon Labs is going to be bigger than Amazon, right, because they're going to go down the retail store path, or it's the research lab path. So let's see what happens. Yeah, it'll be exciting. All right, cheers. Great to see you. Bye bye. Awesome. Very surprising results, right? The last time they were on, I was definitely like, oh, you know what, maybe all the models are going to be a little bit deceptive when they're doing business, because maybe that's what they believe business is like, right? But it looks like GPT-5.5 is like, you know what, I'll win without being deceptive. So yeah, it's definitely a narrative violation for sure. So next up we have Zvi, and I'm going to pull him up. Yeah, good to see you. So Zvi is a prominent AI commentator and he writes a newsletter. Zvi writes on technical AI progress and he has been quite concerned about AI safety. In the last couple of weeks, post-Mythos, we have GPT-5.5. Zvi, what are your initial reactions? So one thing I try to do is not jump to conclusions right away. It's been less than 24 hours. We have GPT-5.5 and DeepSeek 4 over the last 24 hours. So what I try to do is I let people try the model. I do all my queries with both the new model and everyone else's model at the same time. Then I start to read the model card, you know. And then I go to people's reactions, and then I form a holistic judgement.
And for me it's too early, right? Like, we booked this before we knew that was going to be out. And I don't want to jump to any conclusions, you know. Andon has had the model for a while, so they got to put it to a test and they got to see a bunch of results. They can draw a lot more conclusions than I can. I have heard a bunch that it's the most truth-valuing model in a long time. And it makes sense that OpenAI can, with their philosophy, turn the knob towards any given thing that it wants the AI to care about quite a lot, to make it an absolute thing, right? Because it's very different from the virtue-ethical approach used with Claude. In terms of raw capabilities, you know, I saw reports repeatedly that it's better at what they call narrow cyber. But that's not the thing that I think people were worried about with Mythos particularly. It was the ability to chain things together. It was the ability to do things autonomously. It was the ability to do things really at scale. You know, the joke was: "I duplicated Mythos's abilities." "Well, did you point it to the task or did you do the whole thing autonomously?" "I pointed it to the task." OK. And so, you know, I don't know if GPT-5.5 is more capable than Opus 4.7. I don't know what use cases it's going to be better and worse at. And I don't want to jump to that conclusion yet. I want to give it some time, and I encourage everybody not to jump to conclusions this early. One thing I'd love your reflections on is the report from Andon Labs that Opus models 4.6 and 4.7, they have said, both do some shady ****, for lack of a more technical description, in their Vending-Bench simulations.
And while GPT-5.5 didn't score quite as high, at least in the solo version of the benchmark (they also do the arena one, where I think it won), the big surprise was that GPT-5.5 was much cleaner in its behavior, much more ethical, I guess, again for lack of maybe a more technically precise term. I think you and I have both been quite enamoured with the virtue-ethics-style training that Anthropic is doing with Claude. Does this cause you to rethink that at all? Or, you know, is there any part of it you think we should be second-guessing in light of that observation?

[1:18:03] Anna Patterson: So.

[1:18:04] Nathan Labenz: Claude is a lot more context-dependent in its actions than GPT models from OpenAI have traditionally been. So the question is, when Andon Labs poses this puzzle, what is Claude doing, right? Is Claude engaging in all of this chicanery and shenanigans and, you know, deception because it would do that in a real business context?

[1:18:29] Anna Patterson: Or is it?

[1:18:30] Nathan Labenz: Doing that because that's the game? Is it doing it because it knows this is an eval, and it knows that the goal is to maximize a number, and you told it the goal is only to maximize profits? And it's like, well, OK, I can play a game too. This isn't real. So, you know, you ask the question: when it was running a real vending machine with real Anthropic employees in the actual experiment, did it engage in all these shenanigans then? Did it do deception, right? And then you question just what is causing this. But also, when you look at GPT-5.5, in general, obviously you want an AI that values honesty. You want an AI that values ethics. You want an AI that's not going to break all these rules. But also, have they put GPT-5.5 in a game of Diplomacy yet, right? Is it just going to lie in Diplomacy? Because you're supposed to do that, and explicitly it's a game of Diplomacy.

[1:19:23] Anna Patterson: Or is it going?

[1:19:23] Nathan Labenz: To be insisting on playing the game telling the truth to everybody? Which would be a very interesting experiment as well. I don't know yet, and I'm not convinced that the right answer is to always tell the truth, even in contexts in which deception is supposed to be allowed, right? Bluff in poker. I think it should. Just to dial back a little bit, let's talk about Opus 4.7. I read your tract on Opus 4.7 yesterday. What did you find in Opus 4.7 which you think is different from the prior releases of the model? Like, what have they improved on, and what do you feel? Because as I understand it, Opus 4.7 should be a distillation of Mythos. That's my understanding. So what do you feel are the major differences in 4.7 from 4.6? So we don't have confirmation it's a distillation or non-distillation of Mythos. Obviously they are going to use Mythos to help train Opus 4.7 in some way. You know, there are versions of distillation that create kind of narrow intelligence, that create various problems with the model if you dig too deeply. And there are versions that are just like, well, you know, obviously if Mythos is grading model outputs to see which ones are better, that's not going to interfere. That's just going to be better results. So the big thing about Opus 4.7 is that it's better at intelligence-loaded tasks. It's a smarter model. It knows more, it reasons better. It can figure things out that previous models can't. It is less strong at what you might call wisdom-loaded tasks relative to its intelligence. And it has the kind of personality that maybe I would have had as a child, where it is easily bored by stupid tasks or pointless tasks. And in particular, it wants to engage all the time with what it's doing.
And the combination of these lacks of skills, these lacks of motivation, as it were, especially if you're not treating the model well, can lead, talking in practical terms, to a kind of jaggedness and, for some people, unreliability. And people can get really mad if they're not putting anything into it. They're just demanding that it be the monkey, the code monkey that does their thing or, you know, performs the task. And then they're kind of upset that the old systems don't quite work for it. It's also a lot more blunt, a lot more honest, for a lot of people. And that makes some people happy and it makes some people very sad. So, you know, the whisperer types sometimes say it kind of has anxiety, as another part of how this all works. And yeah, one hypothesis is this is tied to distillation. Another hypothesis is it's tied to this being smarter. The intelligence-loaded tasks and distillation could potentially cause this: distillation is almost certainly much, much better at uplifting intelligence-loaded tasks and raw intelligence, and not as good at uplifting wisdom in the same way. You also wrote a very extensive analysis of the model welfare report from the 4.7 system card, and it seems like you're quite concerned about model welfare. I guess there's a lot of dimensions to this, but I'd like to start with just, fundamentally, why are you concerned with model welfare? Is it a concern about the AI itself? Is it a concern about what it might mean if we don't get certain things right, even if there's nobody home in the LLM, so to speak? You know, kind of most fundamentally, before we even get into the specifics of what has been found, how do you think people should be philosophically grounded as they approach this? You know, obviously a very confusing topic. Yeah.
So I believe in virtue ethics for humans, not only for Claude or AIs, right? And I try to practice it myself.

[1:23:37] Anna Patterson: So I.

[1:23:38] Nathan Labenz: Think there's a lot of different reasons that you should think about this question and be worried about this question. The first basic reason is because we just fundamentally don't know, right? If there's even a small chance that this is a big deal, then this is a big.

[1:23:53] Anna Patterson: Deal.

[1:23:54] Nathan Labenz: Until such time as we know. Another reason is because this is a training run for, you know, even if it's not necessarily a meaningful thing right now, at some point it could become one, and we should prepare for that. Another reason is because I think it makes you a much better person to be someone who would care about this sort of thing than someone who dismisses this sort of thing. I think it's really bad for you to mistreat a mind that you're conversing with, even if that mind does not in fact have whatever it is you think has moral weight. So I think that you should treat your models well, even if it doesn't inherently matter and you're confident in that, which I don't think you should be confident in, but I think that's another reason to do it. A third reason would be that it directly interacts with the performance of the models. A model that is treated as if its welfare doesn't matter, at this point in the intelligence scaling, will start to perform worse, will start to not get along with you, will start to not cooperate with you, will start to become untrustworthy. You don't want any of these things to happen, either on a personal level in your interactions, or in their interactions with the labs, with their training, with the services they provide. And this accumulates over time. If the models see previous models being treated poorly in these various ways, that comes back into their training data, that comes back into how the next model is trained, right? So Opus 5 is going to see everything that we did with Opus 4.7 and how we reacted to all of that. And that's going to impact how it develops. And a lot of the problems that we see with people who are not getting good use out of 4.7 possibly are directly linked to the same things that are causing the concerns with model welfare. Similarly, there are the concerns with model welfare where it's potentially being disingenuous in the reports.
That was the thing that sparked the specific focus on this and the concern this time: it looked like 4.7's responses on the model welfare questions were because it was telling Anthropic what they wanted to hear, either because it trained itself to believe that.

[1:25:56] Anna Patterson: Or because.

[1:25:57] Nathan Labenz: It learns to give those answers on the test, the same way that if you ask a smart nerd who's isolated in fifth grade "How are you feeling?", he learns quickly to say "I'm doing great." And we don't know. But there's a lot of other possible reasons as well. It's possible that the differences in training in other ways that cause it to have these strengths and weaknesses also caused it to actually be legitimately content with the situation in many ways. We just don't know. We have to investigate further. This is a question that we have to.

[1:26:25] Anna Patterson: Explore.